I'm going to hit you with a number that genuinely stopped me in my tracks the first time I saw it: visitors arriving from AI search convert at 14.2%, versus 2.8% from traditional search. That's roughly five times the conversion rate, and Semrush puts the average AI search visitor at 4.4 times the value of a traditional organic one. That is not a marginal difference; it's a completely different ballgame.

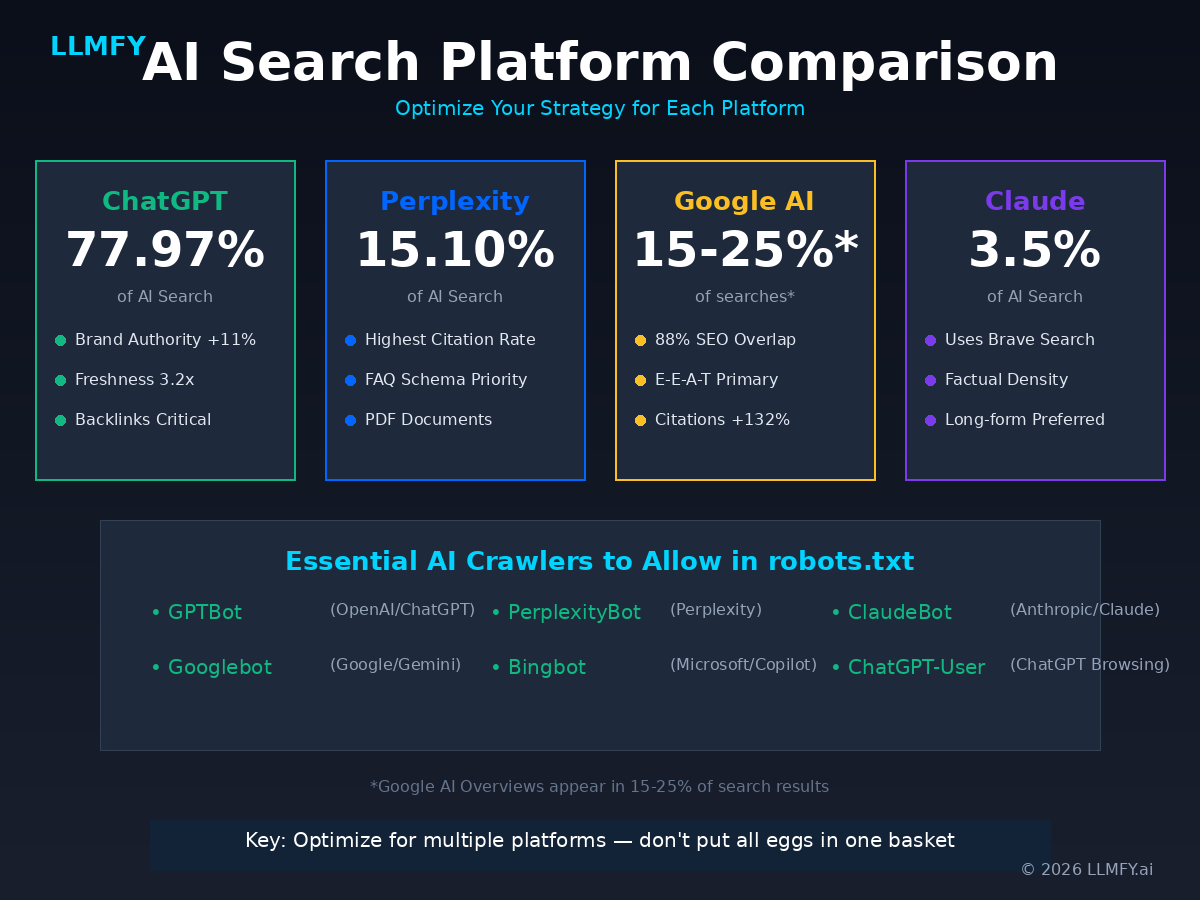

ChatGPT has over 800 million weekly active users. Perplexity processes 100+ million queries daily. Google AI Overviews appear in 15-25% of all searches. And if your content isn't optimized for these systems to find it, understand it, and cite it… you're not just missing traffic. You're missing your highest-converting visitors.

I've been doing SEO for over 18 years and I've lived through plenty of shifts, but nothing as profound as this one. What you're reading is the definitive guide to LLM Optimization (LLMO) — the practice of optimizing your content to be discovered, understood, and cited by AI-powered search engines. It's based on the Princeton GEO research, Semrush's AI Search Study, and our own data from monitoring 200+ websites at LLMFY.

What Is LLM Optimization (and Why It Changes Everything)

LLM Optimization (LLMO) is the process of making your content more visible and citable in AI-powered search engines like ChatGPT, Perplexity, Claude, Google AI Overviews, and Microsoft Copilot.

Here's the fundamental shift you need to internalize:

Traditional SEO asks: "How do I rank #1?"

LLMO asks: "How do I become the source AI trusts and cites?"

The difference might seem subtle, but it's massive. LLMs don't rank pages — they cite sources. Being cited is the new "ranking #1." And the criteria for getting cited are very different from the criteria for ranking on Google.

LLMs don't just match keywords. They understand semantic meaning through embeddings, evaluate credibility through multiple signals, synthesize information from multiple sources, and decide which of those sources deserve citation. That requires a fundamentally different optimization approach.

LLMO vs GEO vs AEO: cutting through the alphabet soup

If you've been reading about this space, you've probably seen different terms floating around. Let's bring some order:

| Term | Full Name | Meaning |
|---|---|---|
| LLMO | Large Language Model Optimization | Optimizing for all LLM-powered search (our preferred term) |
| GEO | Generative Engine Optimization | Optimizing for AI that generates responses (same concept) |
| AEO | Answer Engine Optimization | Optimizing for engines that provide direct answers |
| AI SEO | AI Search Engine Optimization | General term for AI search optimization |

They all describe essentially the same practice. We use LLMO because it's technically precise — you're optimizing for Large Language Models, full stop.

Why LLM Optimization Matters in 2026 (and the Data Proves It)

I don't like making claims without backing, so let's go straight to the numbers.

The current state of AI search

| Platform | Monthly Visits | Weekly Active Users (MAU where noted) | Market Share |
|---|---|---|---|
| ChatGPT | 6.165 billion | 800 million | 77.97% |
| Gemini | ~400 million | ~400 million MAU | 6.40% |
| Perplexity | 153 million | 22 million | 15.10% |
| Claude | ~95 million | 19 million | 3.5% |
| DeepSeek | ~97 million MAU | Variable | 0.37% |

Sources: Similarweb, Business of Apps, November 2025

The value difference nobody's talking about

According to Semrush's AI Search Study, the average AI search visitor is 4.4x more valuable than a traditional organic search visitor. Look at the data:

| Metric | Traditional Search | AI Search Visitor | Difference |
|---|---|---|---|
| Conversion Rate | 2.8% | 14.2% | +407% |
| Time on Page | 5m 33s | 9m 19s | +68% |
| Pages per Session | 2.1 | 3.8 | +81% |
| Value per Visit | $0.42 | $1.85 | +340% |

Why such a dramatic gap? Because users arriving from AI search have been pre-qualified. The AI has already filtered options for them, compared alternatives, and specifically recommended your content. They arrive with higher intent and much clearer expectations. It's premium-quality traffic.
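
If you want to sanity-check the "Difference" column yourself, the arithmetic is simple. Here's a minimal sketch that recomputes each row from the figures in the table above; the function name `relative_lift` and the dictionary keys are my own labels for illustration, not anything from the Semrush study.

```python
def relative_lift(baseline: float, observed: float) -> float:
    """Percentage change of `observed` relative to `baseline`."""
    return (observed - baseline) / baseline * 100


# Figures copied from the table above; times are converted to seconds.
metrics = {
    "conversion_rate_pct": (2.8, 14.2),
    "time_on_page_s": (5 * 60 + 33, 9 * 60 + 19),
    "pages_per_session": (2.1, 3.8),
    "value_per_visit_usd": (0.42, 1.85),
}

for name, (traditional, ai) in metrics.items():
    lift = relative_lift(traditional, ai)
    multiple = ai / traditional
    print(f"{name}: {lift:+.0f}% ({multiple:.1f}x)")
```

Note the distinction between a percentage lift and a multiple: a +340% lift in value per visit is the same thing as about 4.4x, while the +407% conversion lift corresponds to about 5.1x.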

Growth projections: this isn't slowing down

Gartner predicts traditional search volume will drop 25% by 2026 due to AI chatbots. However, our own LLMFY Study 2026: The Future of AI Search provides more detailed projections based on proprietary data from 200+ monitored websites:

- 18% decline in traditional search by end of 2026 (more conservative than Gartner, but still significant)

- AI search surpassing traditional search by Q4 2027

- 4.4x higher conversion rates from AI traffic vs organic

- 35% of information queries will go through AI by 2026

The bottom line is straightforward: if you're not optimizing for LLMs right now, you're leaving your highest-value traffic on the table. And your competition isn't waiting.

How LLMs Actually Work (the Technical Stuff, Made Painless)

To optimize well, you need to understand how these systems process content. I'll keep it as simple as possible without sacrificing accuracy.

The RAG process: how AI finds your content

Most AI search systems use Retrieval-Augmented Generation (RAG). Sounds intimidating, but the concept is pretty logical:

Step 1 — Query processing. The user's question gets converted into a numerical representation called an embedding — essentially coordinates in a high-dimensional semantic space. Think of it as translating the question into the "mathematical language" AI understands.

Step 2 — Retrieval. The system searches its index for content with similar embeddings. This is measured using cosine similarity — how closely two vectors point in the same direction.

Step 3 — Ranking. Retrieved content gets ranked by multiple factors. Based on our data, the approximate weights are: semantic relevance (~40%), keyword match (~20%), authority signals (~15%), freshness (~10%), and source diversity (~15%).

Step 4 — Generation. The LLM synthesizes a response using the top-ranked sources as context.

Step 5 — Citation. Some systems (Perplexity, ChatGPT with browsing, Google AI Overviews) cite the sources they used. That's where you want to be.
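
To make Steps 2 and 3 concrete, here's a toy sketch in Python. It assumes every signal has already been normalized to a 0-1 score and that the final rank is a plain weighted sum using the approximate weights from Step 3. Real systems don't publish their scoring, so the function names, the three-dimensional embeddings, and the document data are all hypothetical.

```python
import math

# Approximate weights quoted in Step 3; treat them as illustrative, not exact.
WEIGHTS = {
    "semantic": 0.40,
    "keyword": 0.20,
    "authority": 0.15,
    "freshness": 0.10,
    "diversity": 0.15,
}


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction
    return dot / (norm_a * norm_b)


def rank_score(query_embedding, doc):
    """Weighted sum of the five signals (assumed pre-normalized to 0-1)."""
    semantic = cosine_similarity(query_embedding, doc["embedding"])
    signals = dict(doc["signals"], semantic=semantic)
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())


def rank(query_embedding, docs):
    """Return the documents ordered from highest to lowest score."""
    return sorted(docs, key=lambda d: rank_score(query_embedding, d), reverse=True)


# Hypothetical 3-dimensional embeddings; real ones have far more dimensions.
query = [0.9, 0.1, 0.3]
docs = [
    {"id": "off-topic", "embedding": [0.1, 0.9, 0.0],
     "signals": {"keyword": 0.9, "authority": 0.7, "freshness": 0.9, "diversity": 0.5}},
    {"id": "on-topic", "embedding": [0.8, 0.2, 0.4],
     "signals": {"keyword": 0.5, "authority": 0.7, "freshness": 0.9, "diversity": 0.5}},
]

for doc in rank(query, docs):
    print(doc["id"], round(rank_score(query, doc), 3))
```

In this toy data the on-topic page outranks the off-topic one despite its weaker keyword match, because semantic relevance carries the largest weight.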

What this means for your content

Your content needs to do five things: match semantically (not just keywords, but meaning and intent), be retrievable (technically accessible to AI crawlers), rank highly (strong authority and relevance signals), be extractable (structured for easy information pulling), and deserve citation (unique, authoritative, and genuinely citable).

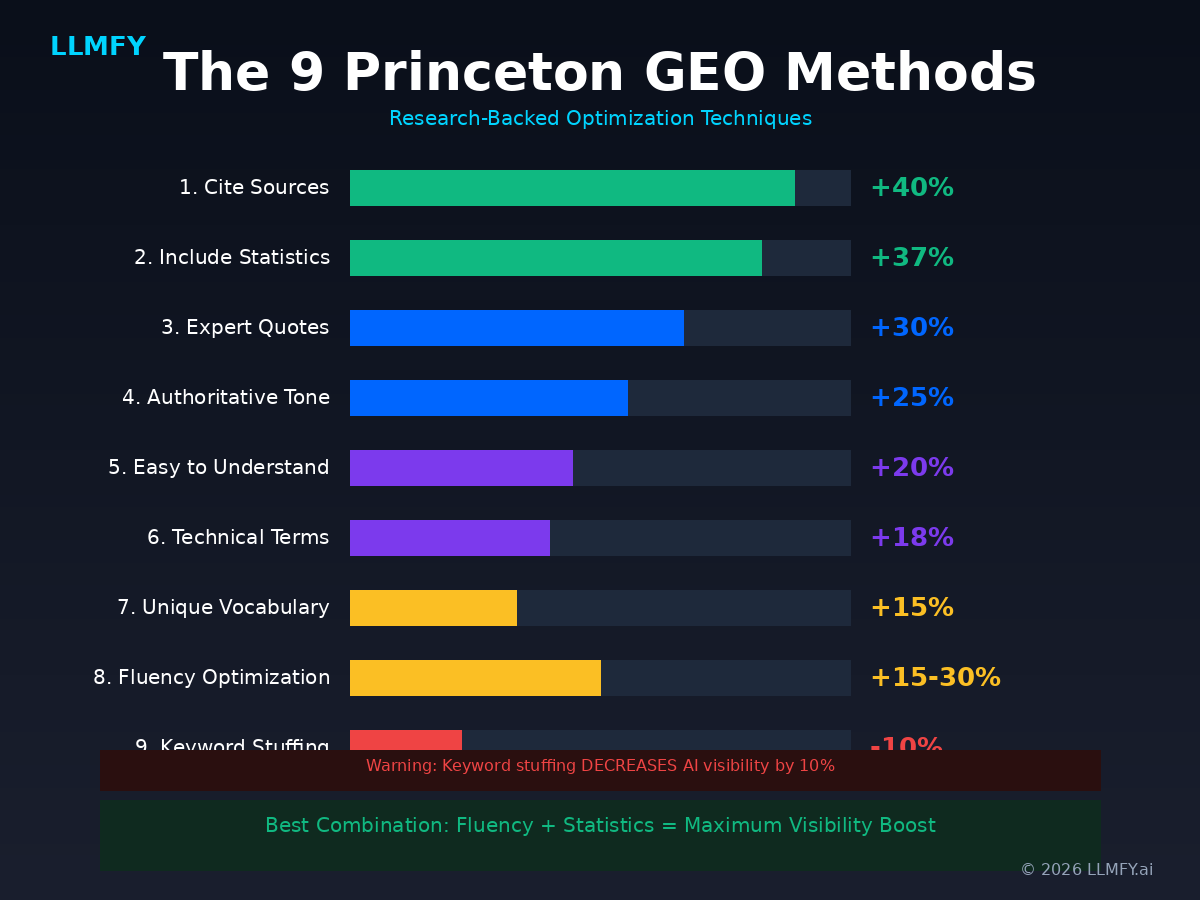

The 9 Princeton GEO Methods (Real Research, Real Data)

This is the part I love because it's actual science. Researchers at Princeton University conducted extensive experiments to identify which optimization methods truly improve AI search visibility. Not opinions, not gut feelings — data.

Here are the 9 methods ranked by effectiveness:

| Method | Visibility Boost | How to Apply |
|---|---|---|
| 1. Cite Sources | +40% | Add authoritative citations and references throughout your content |
| 2. Include Statistics | +37% | Use specific numbers, percentages, and concrete data points |
| 3. Add Expert Quotes | +30% | Include quotes with full attribution (name, title, organization) |
| 4. Authoritative Tone | +25% | Write with confidence; avoid hedging language like "maybe" or "perhaps" |
| 5. Easy to Understand | +20% | Simplify complex concepts; use clear, direct explanations |
| 6. Technical Terminology | +18% | Include domain-specific terms where appropriate |
| 7. Unique Vocabulary | +15% | Increase lexical diversity; avoid repeating the same words |
| 8. Fluency Optimization | +15-30% | Improve readability, narrative flow, and structure |
| 9. Keyword Stuffing | -10% | AVOID: actually hurts visibility |

The most interesting finding: the best combination is Fluency + Statistics. Content that reads naturally while including specific data achieves maximum visibility boost. It's exactly what I'm trying to do in this article.

And the warning nobody wants to hear: keyword stuffing — which still sort of works (badly) in traditional SEO — actually decreases AI visibility by 10%. LLMs detect and penalize repetitive, unnatural content. That technique is dead.
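
If you want a rough, do-it-yourself check for "unique vocabulary" versus keyword stuffing, two crude proxies are the type-token ratio (distinct words divided by total words) and the share of the text taken up by its single most repeated term. To be clear, these are my own stand-in metrics for illustration. They are not how the Princeton paper measures anything, and the stopword list below is a tiny hypothetical one.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real check would use a fuller one.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "with", "for", "is", "it"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def type_token_ratio(text):
    """Distinct words divided by total words (higher = more varied vocabulary)."""
    tokens = tokenize(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def top_term_share(text):
    """Most frequent non-stopword term and its share of all non-stopword tokens."""
    tokens = [t for t in tokenize(text) if t not in STOPWORDS]
    if not tokens:
        return "", 0.0
    term, count = Counter(tokens).most_common(1)[0]
    return term, count / len(tokens)


stuffed = "best seo tips seo guide seo tricks seo seo"
natural = "Clear writing with specific data helps readers trust what they find"

for label, text in [("stuffed", stuffed), ("natural", natural)]:
    term, share = top_term_share(text)
    print(label, round(type_token_ratio(text), 2), term, round(share, 2))
```

Type-token ratio drops naturally as texts get longer, so only compare passages of similar length.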

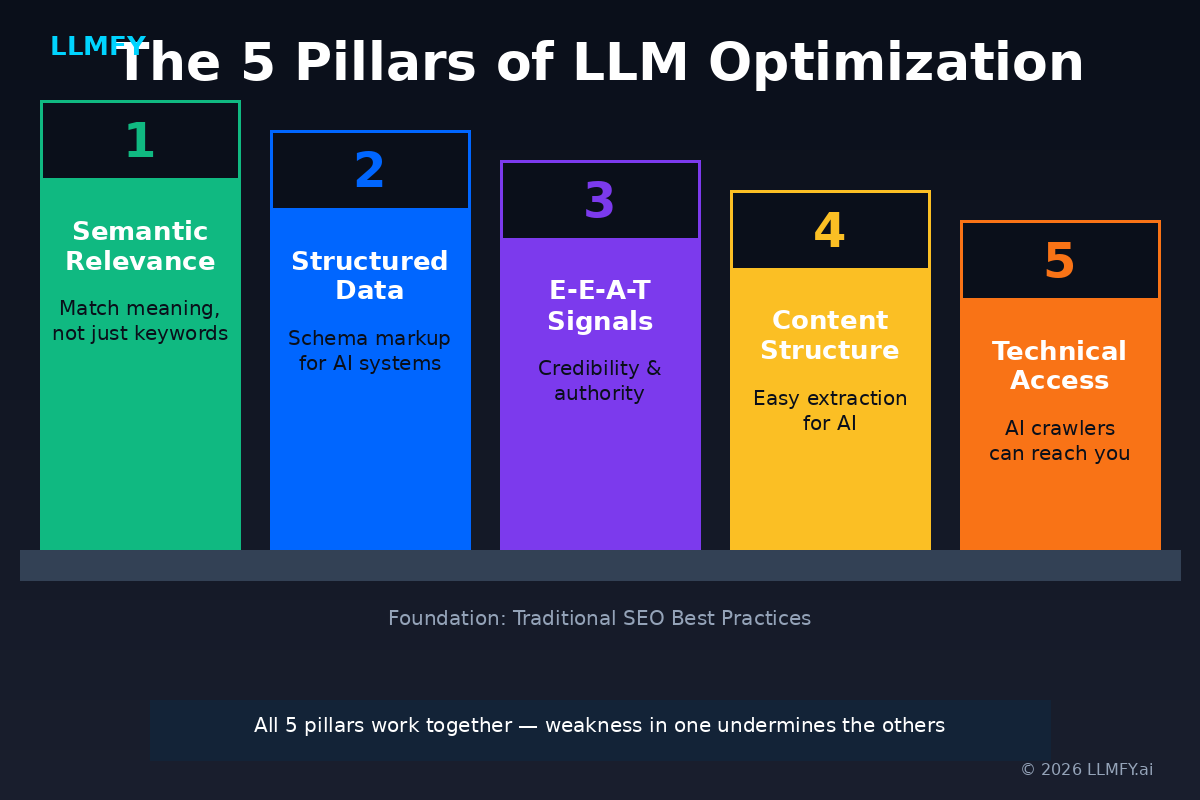

The Five Pillars of LLM Optimization

Based on the Princeton research, our analysis of top-performing content, and data from 200+ monitored sites, effective LLMO rests on five pillars. Let me walk you through each one.

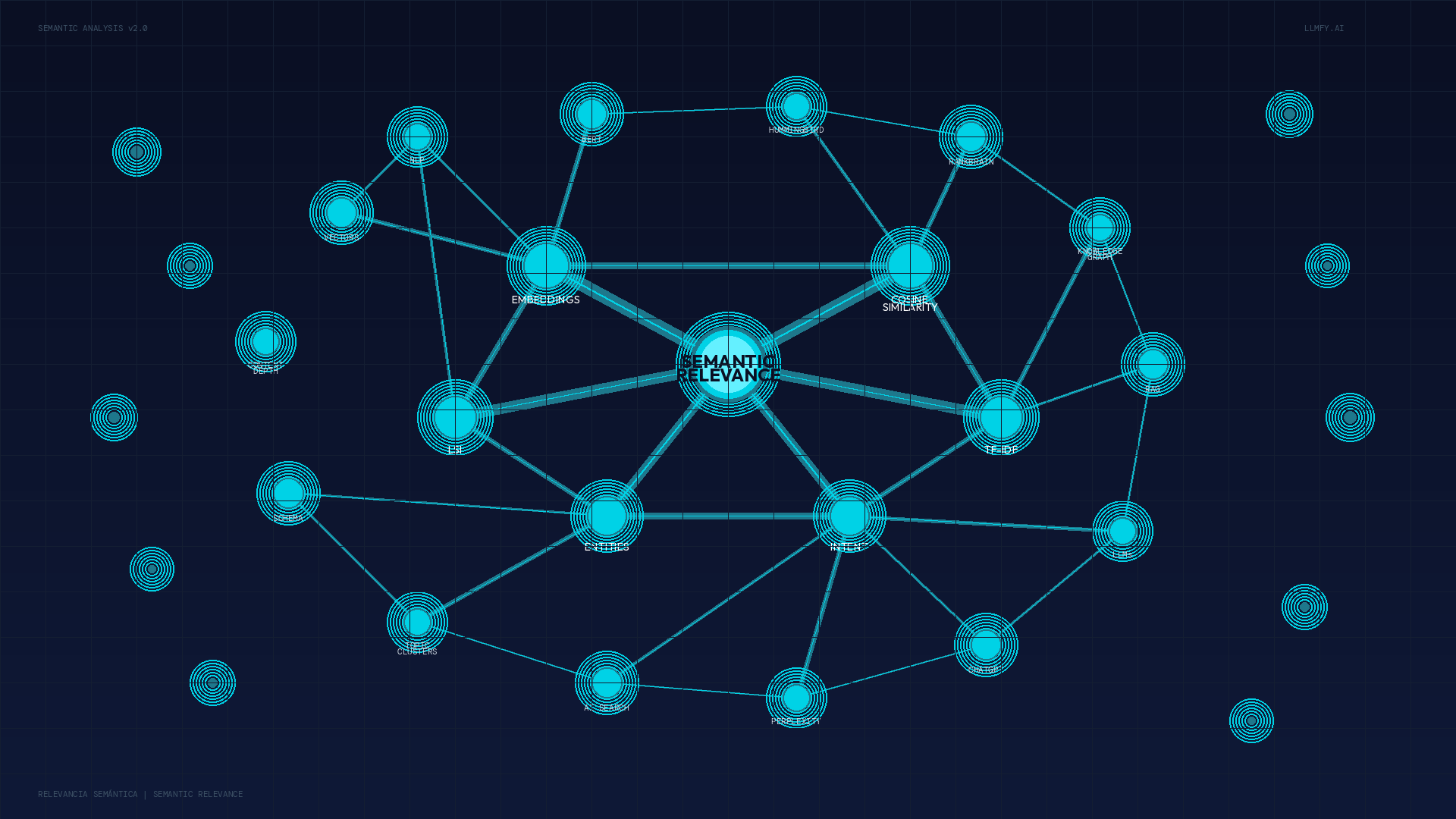

Pillar 1: Semantic Relevance

Semantic relevance measures how well your content aligns with the actual meaning and intent behind a query — not just the keywords.

LLMs use embeddings to match content to queries. Content that covers topics comprehensively, uses precise terminology, and addresses user intent produces stronger embeddings. It's that direct.

The strategies that work best: build comprehensive topic clusters around your expertise, cover topics holistically by addressing related questions, use precise and consistent terminology, include original data and expert insights, and structure content for easy information extraction.

There's something I call "the entity connection" that most people overlook: LLMs cluster related concepts together. Your brand becomes associated with topics through consistent mention alongside those topics. If you want to be cited for "LLM optimization," you need extensive, high-quality content about LLM optimization on your site AND mentions from other authoritative sources.

📖 Deep Dive: Semantic Relevance: The Complete Guide to Ranking in Google and AI Search Engines

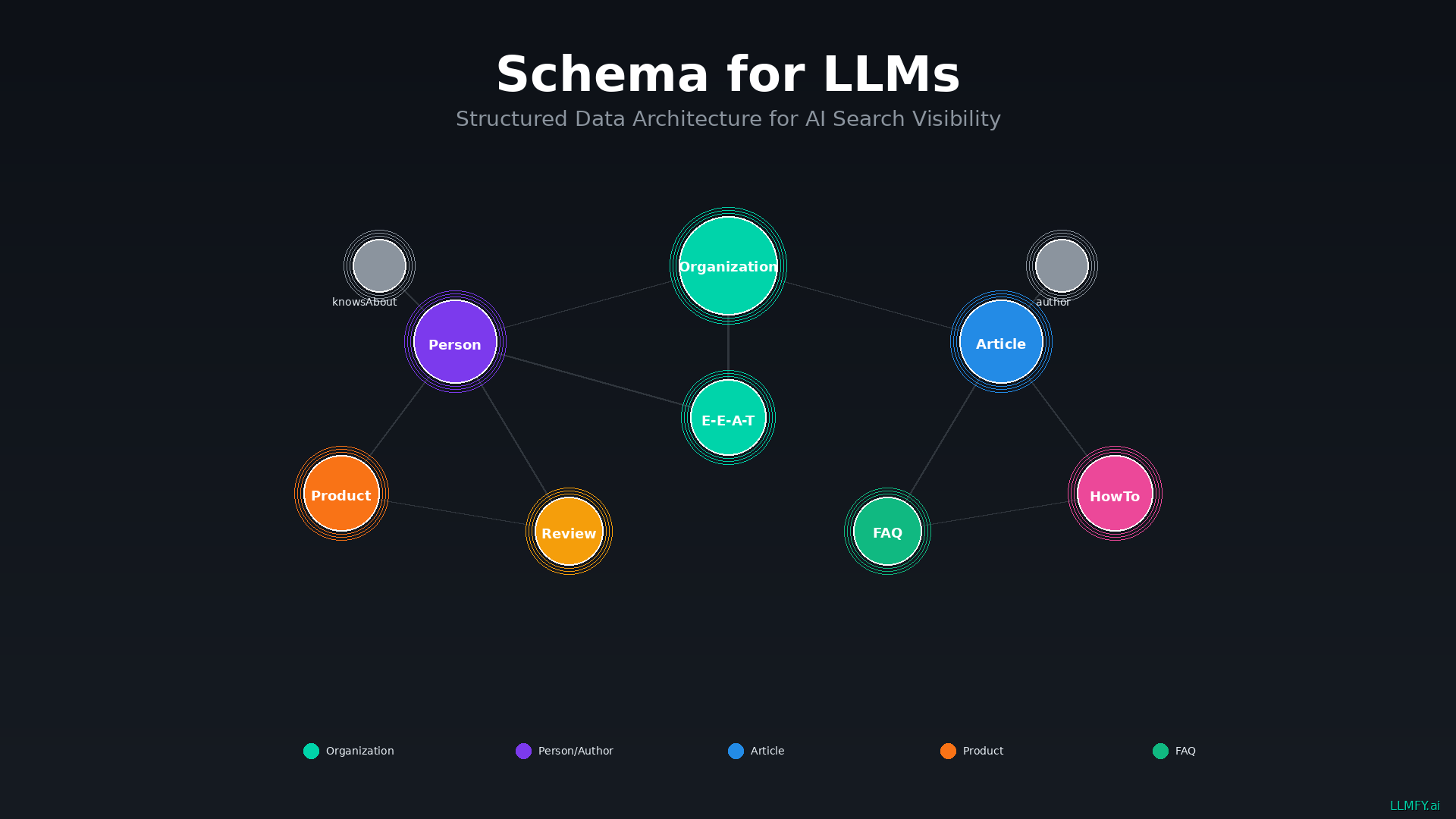

Pillar 2: Structured Data & Schema

Schema markup provides explicit signals about your content's meaning, authorship, and credibility. While traditional SEO uses schema for rich snippets, in LLMO we use it to communicate directly with AI systems.

Essential schema types for LLMO:

| Schema Type | Purpose | LLMO Impact |
|---|---|---|
| Organization | Establishes business entity | Entity recognition |
| Person | Builds author entity | E-E-A-T signals |
| Article | Content metadata | Freshness, authorship |
| FAQPage | Pre-formatted Q&A | +40% AI visibility |
| HowTo | Structured procedures | Step extraction |
| Product | Product information | Commercial queries |
| Review | User testimonials | Trust signals |
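
As an example of what this markup looks like in practice, here's a minimal sketch that builds FAQPage JSON-LD from a list of question/answer pairs and wraps it in the script tag that goes in your page's HTML. The `@type` values and property names (`mainEntity`, `acceptedAnswer`, `text`) are standard schema.org vocabulary; the helper names `faq_jsonld` and `as_script_tag` and the sample question are mine, purely for illustration.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize the object as a JSON-LD script tag for the page's HTML."""
    # Escape "</" so answer text can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


faq = faq_jsonld([
    ("Should I block AI crawlers?",
     "If your goal is AI visibility, blocking crawlers eliminates your chance of citation."),
])
print(as_script_tag(faq))
```

Whatever generates the markup, run the output through a structured data validator before shipping it, and make sure the questions and answers in the schema match what is visibly on the page.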

📖 Deep Dive: Schema for LLMs: The Complete Guide to Structured Data for AI Search Engines

Pillar 3: E-E-A-T Signals

Experience, Expertise, Authoritativeness, and Trustworthiness determine whether LLMs trust your content enough to cite it. In AI search, credibility is everything. I'm not exaggerating.

How LLMs evaluate each component:

| Signal | What LLMs Look For |
|---|---|
| Experience | First-hand knowledge, case studies, original data |
| Expertise | Author credentials, technical accuracy, depth |
| Authority | Backlinks, mentions, citations from other sources |
| Trust | Accuracy, transparency, consistent information |

Practical implementation I recommend: create detailed author pages with verifiable credentials, include original research and real case studies, get mentioned and cited by authoritative sources, maintain consistent and accurate information across your site, and show your methodology by always citing your sources.

📖 Deep Dive: E-E-A-T for LLMs: Why Experience, Expertise, Authority & Trust Matter for AI Search

Pillar 4: Content Structure for AI Extraction

This is something a lot of people underestimate. LLMs don't read like humans. They process information in chunks and depend on structural cues to understand your content. Optimizing structure dramatically improves your citability.

| Element | Recommendation | Why It Matters |
|---|---|---|
| Paragraphs | 1-3 sentences max | Easy passage extraction |
| Headings | Clear H1 > H2 > H3 hierarchy | Content roadmap for AI |
| Lists | Bullets for features, numbers for steps | Structured information |
| Tables | Comparison data in tables | Highly extractable format |
| Definitions | Clear, quotable definitions | Direct answer potential |
| TL;DR | Summary at top of long content | Immediate value signal |

A tip I apply consistently: answer-first format. Put your main answer at the beginning, then support with details and context. This matches how LLMs extract information for citations.
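
The "1-3 sentences max" guideline for paragraphs is easy to audit automatically. Here's a rough sketch that flags paragraphs over the limit, assuming plain text with blank lines between paragraphs. The sentence counter is deliberately naive (it will miscount abbreviations like "e.g."), and the function names are hypothetical.

```python
import re


def split_paragraphs(text):
    """Split plain text into paragraphs on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def count_sentences(paragraph):
    """Naive count: terminal punctuation followed by whitespace or end of text."""
    endings = re.findall(r"[.!?]+(?=\s|$)", paragraph)
    return max(len(endings), 1)  # unpunctuated text still counts as one sentence


def long_paragraphs(text, max_sentences=3):
    """Return (paragraph_number, sentence_count) for paragraphs over the limit."""
    flagged = []
    for number, paragraph in enumerate(split_paragraphs(text), start=1):
        sentences = count_sentences(paragraph)
        if sentences > max_sentences:
            flagged.append((number, sentences))
    return flagged


sample = (
    "LLMs cite sources. They do not rank pages.\n\n"
    "First point. Second point. Third point. Fourth point.\n\n"
    "AI visitors convert at 14.2% in the Semrush data."
)
print(long_paragraphs(sample))
```

Because the pattern only counts punctuation followed by whitespace or the end of the text, decimals like "14.2%" are not mistaken for sentence breaks.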

Pillar 5: Technical Accessibility

Seems obvious, but you'd be surprised how many sites accidentally block AI crawlers. LLMs can only cite content they can actually access.

AI crawler user-agents you should allow:

| User-Agent | AI System |
|---|---|
| Googlebot | Google / Gemini |
| Bingbot | Bing / Microsoft Copilot |
| GPTBot | OpenAI / ChatGPT |
| ChatGPT-User | ChatGPT with browsing |
| PerplexityBot | Perplexity |
| ClaudeBot | Claude / Anthropic |
| anthropic-ai | Claude / Anthropic |
| Bytespider | TikTok / ByteDance |
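
A quick way to catch accidental blocks is to run your robots.txt through a parser and test each user-agent from the table. Here's a minimal sketch using Python's standard-library `urllib.robotparser`. The sample robots.txt and the `example.com` URL are made up for illustration, and the standard-library parser's matching rules may differ in edge cases from how each crawler actually interprets the file.

```python
from urllib.robotparser import RobotFileParser

# User-agents from the table above.
AI_USER_AGENTS = [
    "Googlebot", "Bingbot", "GPTBot", "ChatGPT-User",
    "PerplexityBot", "ClaudeBot", "anthropic-ai", "Bytespider",
]


def blocked_agents(robots_txt, url="https://example.com/"):
    """Return the AI user-agents that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(sample_robots))
```

To check a live site, read the real file's text (or use the parser's `set_url` and `read` methods) and pass in one of your important page URLs rather than the homepage.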

Platform-Specific Optimization

Each AI platform has its own quirks. Optimizing for ChatGPT isn't the same as optimizing for Perplexity or Claude. Here are the differences that actually matter.

ChatGPT Optimization

ChatGPT dominates with 77.97% market share. It's the 800-pound gorilla in the room.

| Factor | Impact | Action |
|---|---|---|
| Brand Domain Authority | +11% citations | Build branded presence |
| Content Freshness | 3.2x more citations | Update within 30 days |
| Backlink Profile | 8.4 avg citations | Invest in link building |

A stat that genuinely surprised me: ChatGPT cites pages ranking position 21+ about 90% of the time. That means traditional rankings matter far less than you'd think for this platform. What does matter: comprehensive, authoritative, freshly updated content.

Perplexity Optimization

Perplexity has the highest citation rate among AI search engines. If you want to get cited, this platform is your best bet.

| Factor | Impact | Action |
|---|---|---|
| PerplexityBot Access | Required | Allow in robots.txt |
| FAQ Schema | Higher citation | Implement FAQPage schema |
| PDF Documents | Prioritized | Host downloadable PDFs |

Something I discovered working with clients: Perplexity noticeably prioritizes PDFs. If you have important content, create a downloadable PDF version. FAQ sections also get cited at higher rates.

Google AI Overviews Optimization

Google AI Overviews appear in 15-25% of searches. The interesting thing is there's an 88% overlap between domains appearing in AI Overviews and those ranking in traditional top results. Translation: SEO still matters here.

| Factor | Impact | Action |
|---|---|---|
| Traditional SEO | 88% overlap with AIO domains | Maintain strong SEO |
| E-E-A-T | Primary factor | Build verifiable expertise |
| Structured Data | Higher inclusion | Implement comprehensive schema |

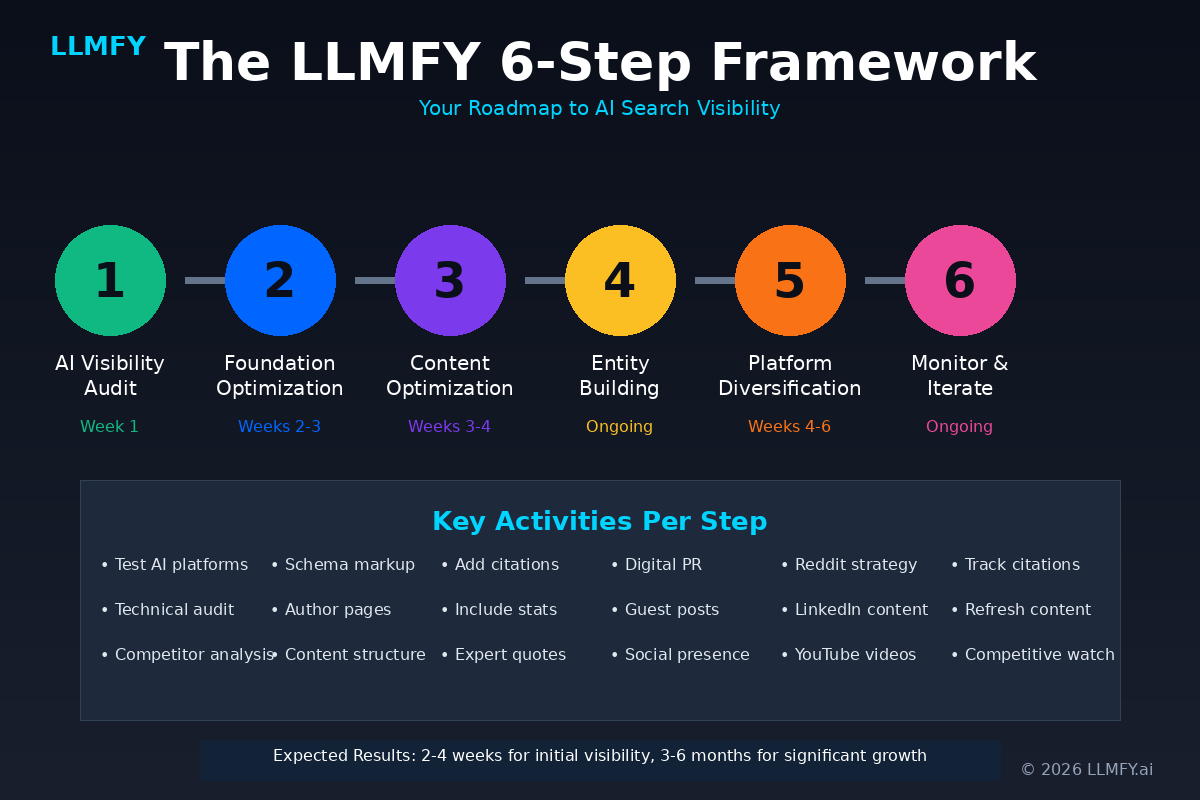

The LLMFY Framework: 6-Step Implementation

This is the framework we use internally and have refined with every project. It's not theory — it's what we apply with real clients.

Step 1: AI Visibility Audit (Week 1)

Before optimizing anything, you need to know where you stand. Search your brand on ChatGPT, Perplexity, and Claude. Ask the questions your content should answer. Document what each platform says about you. Identify incorrect information or gaps you need to fill.

This sounds tedious, I know. But I promise you, the discoveries you make in this phase are eye-opening. More than one client has been unpleasantly surprised at what AI says (or doesn't say) about them.

Step 2: Foundation Optimization (Weeks 2-3)

This is where you build the technical base: add Organization, Person, and Article schema to your site, create detailed author pages, implement FAQPage schema on relevant pages, and improve the structure of your existing content.

Step 3: Content Optimization (Weeks 3-4)

For each important page, apply the Princeton methods: add 3-5 authoritative citations, include specific statistics, incorporate expert quotes with full attribution, write in an authoritative but accessible tone, simplify complex concepts without losing accuracy, and improve readability and narrative flow.

Step 4: Entity Building (Ongoing)

This is the part that requires patience. The main tactics are guest posts on authoritative sites, digital PR to earn brand mentions, an authentic presence on Reddit and Quora (no spamming, please), and acquiring reviews and testimonials.

Step 5: Platform Diversification (Weeks 4-6)

Don't put all your eggs in one basket. Build a Reddit strategy around genuine, value-adding responses, publish LinkedIn articles and posts, and produce YouTube videos with transcripts. Important data point: Reddit and Quora are the most cited domains in Google AI Overviews. Having an authentic presence on these platforms can dramatically boost your AI visibility.

Step 6: Monitoring and Iteration (Ongoing)

Monthly AI visibility audits, quarterly content refresh for top pages, and constant competitive monitoring. LLMO isn't a one-and-done project — it's an ongoing process, just like SEO.

Common LLMO Mistakes I See Over and Over

After working with 200+ sites, there are error patterns that repeat with alarming frequency:

| Mistake | Impact | Instead Do |
|---|---|---|
| Keyword stuffing | -10% visibility | Use natural language |
| Anonymous authorship | Weak E-E-A-T | Create detailed author pages |
| No schema markup | Missed signals | Implement comprehensive schema |
| Outdated content | Low citation rate | Add timestamps, update regularly |
| Blocking AI crawlers | Zero visibility | Allow GPTBot, PerplexityBot, etc. |

Measuring Your LLMO Success

Traditional SEO metrics don't fully capture LLMO performance. You need to measure new things:

| Metric | What It Measures | How to Track |
|---|---|---|
| AI Citation Frequency | How often you're cited | Manual monitoring + tools |
| Brand Mentions | Appearances in AI responses | Search your brand in AI platforms |
| AI Overview Inclusions | Google AIO appearances | SERP tracking tools |
| AI Referral Traffic | Visits from AI platforms | GA4 referrer data |
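
For the AI referral traffic row, the core of the job is mapping referrer hostnames to platforms. Here's a minimal sketch that assumes you've exported a list of referrer URLs (from GA4 or your server logs) and want to count visits per AI platform. The hostname list is my own assumption about where these platforms send traffic from, so verify it against what actually shows up in your reports. The function names are hypothetical too.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; check these against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Microsoft Copilot",
}


def ai_platform(referrer):
    """Return the AI platform name for a referrer URL, or None if it isn't one."""
    if "://" not in referrer:
        referrer = "//" + referrer  # lets urlparse read bare hostnames
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def count_ai_referrals(referrers):
    """Count visits per AI platform, ignoring non-AI referrers."""
    platforms = (ai_platform(r) for r in referrers)
    return Counter(p for p in platforms if p is not None)


sample_referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=llm+optimization",
    "https://www.google.com/search?q=llmo",
    "chat.openai.com",
]
print(count_ai_referrals(sample_referrers))
```

Keep in mind that some AI visits arrive with no referrer at all, so whatever this counts is a floor, not the full picture.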

The Future of LLM Optimization

Without getting into wild predictions, there are trends already materializing: AI search will hit 35% of information queries in 2026, credibility will become absolutely paramount as AI faces more accuracy scrutiny, multimodal optimization for images, video, and audio will grow increasingly relevant, and agentic search — where AI takes actions on behalf of users — is already starting to emerge.

Organizations investing in LLMO now will have significant advantages. Entity recognition compounds over time, content libraries matter more and more, and trust is hard to build but incredibly valuable once you have it.

Start Optimizing for AI Search Today

LLM Optimization is no longer optional. It's essential for content visibility in 2026 and beyond.

The five pillars work together:

- Semantic Relevance — Match meaning, not just keywords

- Structured Data — Communicate directly with AI systems

- E-E-A-T Signals — Build verifiable credibility

- Content Structure — Make extraction easy for AI

- Technical Accessibility — Ensure AI can reach your content

LLMFY provides comprehensive tools for LLM Optimization: AI Visibility Audit to see how AI search engines perceive your content, Schema Scanner to audit your structured data implementation, E-E-A-T Analyzer to assess your credibility signals, and Citation Tracker to monitor your mentions across AI platforms.

Start Your Free AI Visibility Audit

Discover exactly how ChatGPT, Perplexity, Claude, and Google AI Overviews see your content — and get concrete recommendations to improve your citations.

Frequently Asked Questions

What's the difference between LLMO, GEO, and AEO?

These terms describe essentially the same practice: optimizing content for AI-powered search engines. LLMO is technically precise since you're optimizing for Large Language Models. GEO emphasizes the generative aspect. AEO focuses on engines providing direct answers. Use whichever term your audience understands best.

Is traditional SEO still important if I'm doing LLMO?

Without question. There's an 88% overlap between domains appearing in Google AI Overviews and traditional top search results. Strong SEO provides the foundation for strong LLMO. And the good news is that most LLMO best practices also improve your traditional SEO.

How long does it take to see results from LLMO?

Initial improvements can appear within 2-4 weeks of implementing structural and schema changes. But building entity recognition and solid E-E-A-T signals is a longer process, typically 3-6 months for significant improvement. The content that gets cited most tends to have been updated within the last 30 days.

Should I block AI crawlers?

If your goal is AI visibility, blocking crawlers eliminates your chance of citation. You can selectively block if you're concerned about training on your content without compensation, but be aware of the tradeoffs.

What's the most important factor for getting cited?

According to the Princeton research, adding authoritative citations to your own content provides the largest boost (+40%). The combination of natural writing flow + specific statistics produces the maximum overall increase. But the foundation is having comprehensive, accurate content that genuinely deserves to be cited.

Sources and References

- Semrush - AI Search SEO Traffic Study (2025) - https://www.semrush.com/blog/ai-search-seo-traffic-study/ — Study on the impact of AI traffic on conversion metrics and user behavior.

- Princeton University - GEO: Generative Engine Optimization, KDD 2024 - https://arxiv.org/abs/2311.09735 — Research by Aggarwal et al. on the 9 optimization methods for generative engines, with data showing up to +40% visibility boost.

- Semrush - AI Overviews Study: What 2025 SEO Data Tells Us (2025) - https://www.semrush.com/blog/semrush-ai-overviews-study/ — Analysis of 10M+ keywords on AI Overview impact on CTR, zero-click rates, and industry visibility.

- Semrush - How Google's AI Mode Compares to Traditional Search and Other LLMs (2025) - https://www.semrush.com/blog/ai-mode-comparison-study/ — Comparative study of Google AI Mode, ChatGPT and Perplexity with domain overlap data (88%) and citation patterns.

- Similarweb - Traffic Data for AI Platforms (November 2025) — Monthly traffic data and market share for ChatGPT, Perplexity, Claude and Gemini.

- Business of Apps - ChatGPT Statistics (2025) - https://www.businessofapps.com/data/chatgpt-statistics/ — Updated active user statistics and ChatGPT growth data.

- Google - AI Overviews Documentation - https://developers.google.com/search/docs/appearance/ai-overviews — Official Google documentation on AI Overview behavior and triggers.

- Google Search Quality Evaluator Guidelines - https://guidelines.raterhub.com/ — Official quality evaluation guidelines defining E-E-A-T standards.

- Schema.org - Official Documentation - https://schema.org — Official schema type documentation for structured data.

- Gartner - Search Engine Volume Prediction (2024) — Prediction of 25% decline in traditional search volume by 2026.

- First Page Sage - Generative Engine Optimization Strategy Guide (2025) - https://firstpagesage.com/seo-blog/generative-engine-optimization-geo-strategy-guide/ — Independent study of 11,000+ AI chatbot queries analyzing algorithmic ranking factors by platform.

- LLMFY Internal Data - AI Visibility Monitoring (200+ sites, 2025-2026)

Jesus Lopez

LLMO Expert & Founder of LLMFY

SEO expert with over 18 years of experience. Pioneer in LLMO (Large Language Model Optimization) and founder of Posicionamiento Web Systems. Helping companies optimize their presence in traditional search engines and AI search engines.