I'm going to be upfront with you: if your content doesn't communicate E-E-A-T — Experience, Expertise, Authoritativeness, and Trustworthiness — language models like ChatGPT, Claude, and Perplexity will skip right over it without a second thought. Doesn't matter how well-written it is or how perfectly you've placed your keywords. If the AI doesn't sense there's someone credible behind the content, it simply won't cite you.

I've been doing SEO for over 18 years and I've seen plenty of paradigm shifts. But this one feels different. Google originally created the E-E-A-T framework to help its human quality raters evaluate search results. It was a guide for raters, almost a training manual. What nobody anticipated is that the same concept would become the currency of AI-powered search.

Because when LLMs have to decide who to cite among dozens of sources covering the same topic, they don't flip a coin. They look for credibility signals. And those signals are, essentially, E-E-A-T.

In this guide, I'll explain why this matters more than ever and — more importantly — what you can actually do to make your content pass the AI's trust filter.

What Is E-E-A-T and Why Does AI Use It as a Filter?

Before we get into strategies, let's make sure we're on the same page about what we're dealing with.

The Four Pillars, No Sugarcoating

E-E-A-T names four quality signals. Google formalized the current version in December 2022, when it added the first "E", for Experience, to the original E-A-T framework. Each pillar brings something different to the table:

Experience means having skin in the game. A product review written by someone who's used the product for six months carries a weight that a review by someone who has only read the spec sheet can't match. LLMs have learned to spot the kinds of details that only show up when someone has actually lived what they're writing about: specific timeframes, unexpected problems, nuances you won't find in a generic description.

Expertise is knowing what you're talking about, and making it obvious. For technical topics, that means qualifications, certifications, and years of practice. For everyday subjects, it's demonstrated through depth of knowledge and consistent accuracy. Interestingly, LLMs are well placed to gauge expertise: they have been trained on enormous amounts of text, so they can in effect check whether what you're saying is consistent with what many other sources say.

Authoritativeness goes a step further — it's not just what you say about yourself, but what others say about you. Do other sites in your industry cite you? Do reputable publications mention you? Do you show up as a reference when people discuss your topic? For AI systems synthesizing information from multiple sources, authoritativeness acts as a powerful filter for deciding whose voice deserves amplification.

Trustworthiness is the foundation everything else rests on. You can have experience, expertise, and authority, but if you've published inaccurate information, if you're not transparent about your sources, or if your track record isn't clean, trust collapses. And when trust falls apart, LLMs simply discard you. It's the ultimate tiebreaker.

Why Google Created It and Why AI Adopted It

Google's Search Quality Evaluator Guidelines were designed for humans. But it turns out that the same signals that make a human evaluator trust a source are the signals that LLMs also learn to recognize during training.

There are three reasons this works. First, LLMs train on data where strong E-E-A-T content tends to be more accurate, more thorough, and more frequently cited by other sources. The model picks up on those correlations even though nobody explicitly told it to "look for E-E-A-T." Second, many AI search systems, such as Google AI Overviews and Microsoft Copilot (which draws on Bing), pull directly from traditional search infrastructure where E-E-A-T already influences rankings. Third, E-E-A-T's fundamental goal (reliable information from credible sources) is exactly what LLMs need to generate answers that aren't fabricated.

How LLMs Evaluate Each E-E-A-T Pillar

This is the part I find most fascinating: AI doesn't assess E-E-A-T the way a human would, but it's developed its own methods for measuring credibility. And they're more sophisticated than most people realize.

Experience: AI Can Tell If You've Lived It or Just Copied It

LLMs have gotten remarkably good at distinguishing between content that reflects genuine experience and content that merely reshuffles what already exists online. How? Through patterns. Experiential content includes specific details, personal observations, conclusions you can only reach when you've actually been through something, nuances that generic content simply doesn't have.

Let me give you an example from my own work. When I write about LLM optimization, I mention specific mistakes I made with clients, tools I tested that didn't deliver, real implementation timelines. Someone who's read three articles on the topic can't produce that kind of content.

For AI search, the implication is clear: content demonstrating first-hand experience will increasingly outperform surface-level summaries. Include real anecdotes, honest assessments (negative ones too), and details that only someone who's been there would know.

Expertise: Saying It Isn't Enough — You Have to Show It

Expertise signals help LLMs decide whether a source has the depth needed to be accurate. AI evaluates this through multiple channels: consistency of information across your content, depth of explanations, correct use of technical terminology, and the ability to address complex nuances without oversimplifying.

One aspect I think is crucial is internal consistency. If you have ten articles about SEO and three of them contradict three others, the LLM can pick up on that inconsistency, and it weakens the expertise your content signals. It's not a conscious decision: the model has simply learned that reliable sources are consistent with themselves.

My advice? Create thorough content that tackles topics in depth. Show that you understand the exceptions and edge cases. And maintain consistency across your entire content portfolio. Credentials and qualifications should be visible — not as bragging, but as context that helps both humans and machines understand the basis for your authority.

Authoritativeness: What Matters Is What Others Say About You

Authoritativeness is probably the toughest E-E-A-T pillar to build because it depends heavily on external recognition. You can't manufacture it by yourself. LLMs evaluate authoritativeness by analyzing how other sources reference you, cite you, and talk about you.

The signals that contribute: quality backlinks from relevant sources, mentions in industry publications, citations in professional contexts, positive reviews and testimonials, and generally positive sentiment when your brand or name comes up in conversation. AI aggregates all these signals to form a comprehensive picture.

Something important that many people don't realize: authoritativeness is topic-specific. You can be an absolute authority in digital marketing and have zero authority on medical topics. LLMs are getting better at recognizing these boundaries. So focus your authority-building efforts on your actual territory of expertise — don't try to claim authority across everything.

Trustworthiness: The Final Filter AI Applies Without Mercy

Trust works as the ultimate filter. The LLM might recognize that a source has experience, expertise, and authority, but if trust signals are weak or negative, it can still exclude you.

LLMs assess trust across several dimensions. Accuracy gets checked by cross-referencing your claims against other sources. Transparency is measured through clarity of authorship, the sources you cite, and whether you disclose potential conflicts of interest. Consistency matters because sources that remain reliable over time generate more trust than those that swing wildly.

And for YMYL topics (Your Money or Your Life), such as health, finance, and safety, trust requirements are particularly strict. LLMs are trained to be extremely cautious with this type of content. If your site covers YMYL topics without strong trust signals, you're at a severe disadvantage.

Why E-E-A-T Matters More for LLMs Than for Traditional Search

E-E-A-T has always mattered, yes. But in the AI context, its importance multiplies. Let me explain why.

From Searching by Keywords to Searching by Credibility

In traditional SEO, a big part of the job was making sure your content included the words people were searching for. That's still relevant. But LLMs have introduced a profound shift: credibility-based selection.

When an LLM generates a response, it doesn't just match keywords to content and call it a day. It synthesizes information from multiple sources to build a complete answer. Throughout that process, it's constantly making decisions: which source do I trust? Which claim do I include? Which perspective takes priority? E-E-A-T directly influences every single one of those decisions.

The practical implications are significant. Content that ranks well on Google but has weak E-E-A-T signals can get shut out of AI responses entirely. And the reverse is true too: content with exceptional E-E-A-T can get selected even when it's not optimized for keywords in the traditional sense. We're moving from an era of being found to an era of being trusted.

How AI Synthesizes and Decides Who Gets Cited

Unlike traditional Google, which hands you a list of links and lets you choose, LLMs synthesize information into a single unified answer. That synthesis process changes the dynamics completely.

When multiple sources provide conflicting information, the AI has to pick who to believe. And what criterion does it use? E-E-A-T. Especially trustworthiness and authoritativeness.

On top of that, many AI systems now include citations alongside their responses. Those citations are incredibly valuable visibility opportunities. But when AI can choose between dozens of sources covering the same topic, it naturally gravitates toward those with the strongest credibility signals. Sources with weak E-E-A-T might get read and incorporated into the synthesis... without receiving citation credit. The worst of both worlds.

Practical Strategies to Optimize E-E-A-T for AI Search

Alright, we understand the why. Now let's get into the how. These are the strategies I use with my clients that consistently deliver the best results.

Create Author Entities That LLMs Can Recognize

This is one of the most effective strategies I know. It's not enough to stick your name at the bottom of an article. You need to build a complete digital identity that AI can trace and verify.

Start with detailed author pages that include your professional background, relevant credentials, areas of expertise, and links to your published work. Use the same name across all platforms so LLMs can connect the dots between your various online presences. Implement Person schema to give AI structured data about you that it can process easily.
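As a minimal sketch of what Person schema can look like, the Python snippet below builds a schema.org `Person` object and wraps it in the JSON-LD `<script>` tag that goes in a page's HTML. Every name, URL, and profile in it is a hypothetical placeholder, and `to_json_ld_script` is an illustrative helper, not part of any library. The `knowsAbout` property carries your areas of expertise, and the `sameAs` list is where the "same name across all platforms" advice becomes machine-readable: it points at the author's profiles elsewhere.

```python
import json

# Hypothetical author details: replace every value with your own.
author = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Jane Example",
    "jobTitle": "Senior SEO Consultant",
    "url": "https://example.com/authors/jane-example",
    # Areas of expertise, stated explicitly.
    "knowsAbout": ["Search engine optimization", "Structured data"],
    "alumniOf": {"@type": "CollegeOrUniversity", "name": "Example University"},
    "worksFor": {"@type": "Organization", "name": "Example Agency", "url": "https://example.com"},
    # sameAs links this author page to the same person's profiles on other platforms.
    "sameAs": [
        "https://www.linkedin.com/in/jane-example",
        "https://x.com/janeexample",
    ],
}


def to_json_ld_script(data: dict) -> str:
    """Wrap a schema.org object in the <script> tag used to embed JSON-LD in HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


print(to_json_ld_script(author))
```

Keeping the author data in one dictionary makes it easy to reuse the same values on the author page and in the `author` field of each article, so the identity stays consistent everywhere it appears.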

And something a lot of people underestimate: build your presence on third-party platforms. Guest articles on industry publications, profiles on professional networks, contributions to recognized forums — all of that creates external validation that strengthens your author entity. When LLMs encounter your content, they can cross-reference those signals to confirm that your expertise claims are legitimate.

Build Topical Authority Through Content Clusters

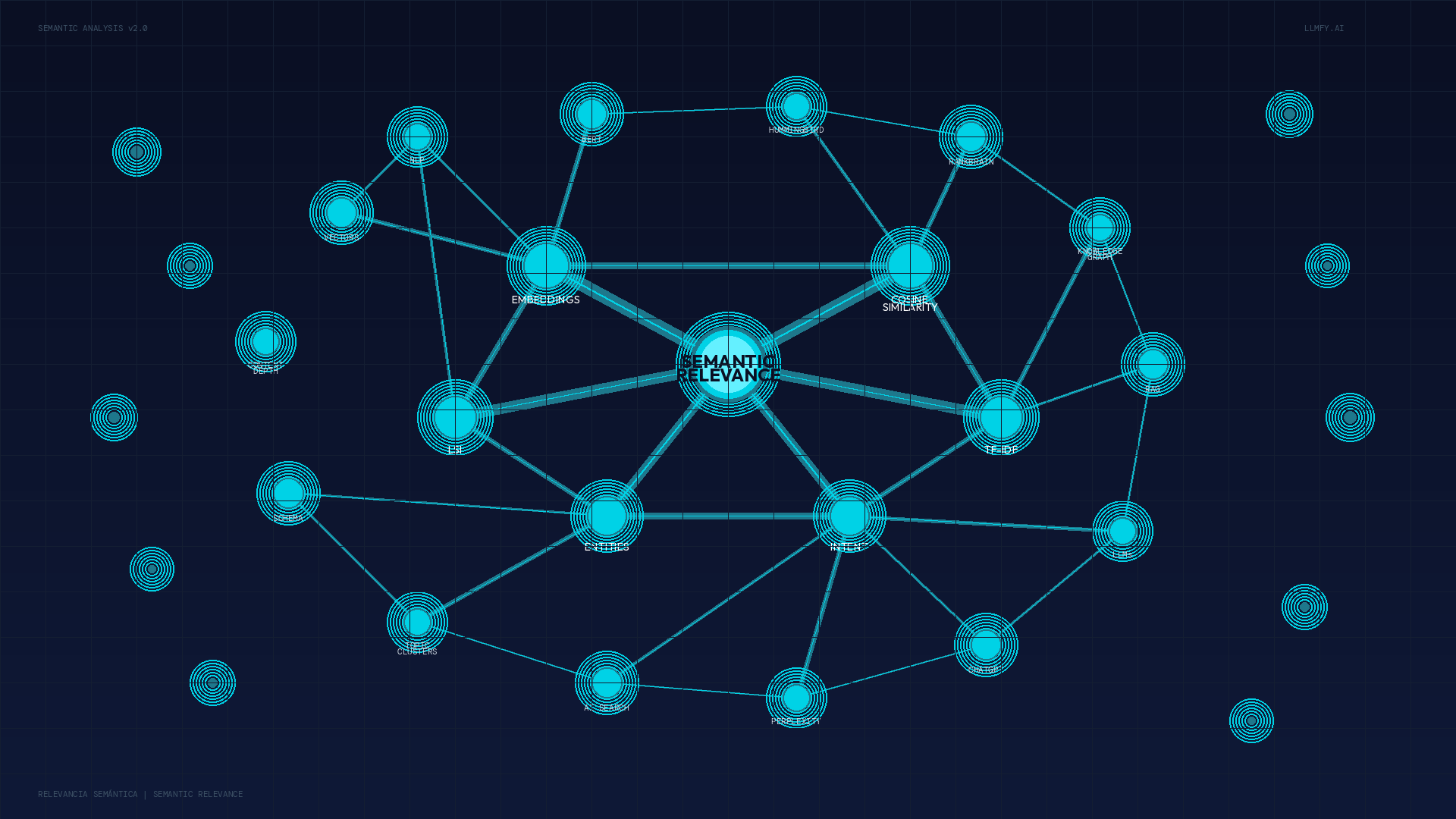

Topical authority — covering a subject thoroughly through interconnected content — sends very strong E-E-A-T signals to both Google and LLMs. This concept is closely tied to semantic relevance, which measures how well your content aligns with the actual meaning behind searches.

Instead of creating standalone pages, develop complete clusters. A pillar page with a comprehensive overview of the topic, supported by detailed articles exploring each subtopic in depth. All strategically interlinked, forming a network that demonstrates systematic coverage.
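One way to sanity-check a cluster is to treat the site as a link graph and confirm that the pillar links to every subtopic page and that every subtopic page links back. The sketch below assumes you already have a crawl export in the form of a mapping from each URL to the internal URLs it links to; the URLs and the `cluster_gaps` function are hypothetical.

```python
# Hypothetical crawl export: each URL maps to the set of internal URLs it links to.
links: dict[str, set[str]] = {
    "/llm-seo/": {"/llm-seo/eeat/", "/llm-seo/schema/", "/llm-seo/tracking/"},
    "/llm-seo/eeat/": {"/llm-seo/", "/llm-seo/schema/"},
    "/llm-seo/schema/": {"/llm-seo/", "/llm-seo/eeat/"},
    "/llm-seo/tracking/": {"/llm-seo/eeat/"},  # never links back to the pillar
}


def cluster_gaps(pillar: str, cluster: list[str], links: dict[str, set[str]]):
    """Return (cluster pages the pillar doesn't link to, cluster pages that don't link back)."""
    outgoing = links.get(pillar, set())
    missing_from_pillar = sorted(p for p in cluster if p not in outgoing)
    missing_back_links = sorted(p for p in cluster if pillar not in links.get(p, set()))
    return missing_from_pillar, missing_back_links


cluster = ["/llm-seo/eeat/", "/llm-seo/schema/", "/llm-seo/tracking/"]
print(cluster_gaps("/llm-seo/", cluster, links))
# → ([], ['/llm-seo/tracking/'])
```

Both directions matter: the pillar-to-subtopic links show coverage, and the back-links tie each deep article to the overview.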

For LLMs this is especially important. When AI looks for comprehensive answers to complex questions, it leans naturally toward sources that have demonstrated the ability to address a topic from multiple angles with consistent expertise. Standalone pages that only touch on topics in passing simply can't compete.

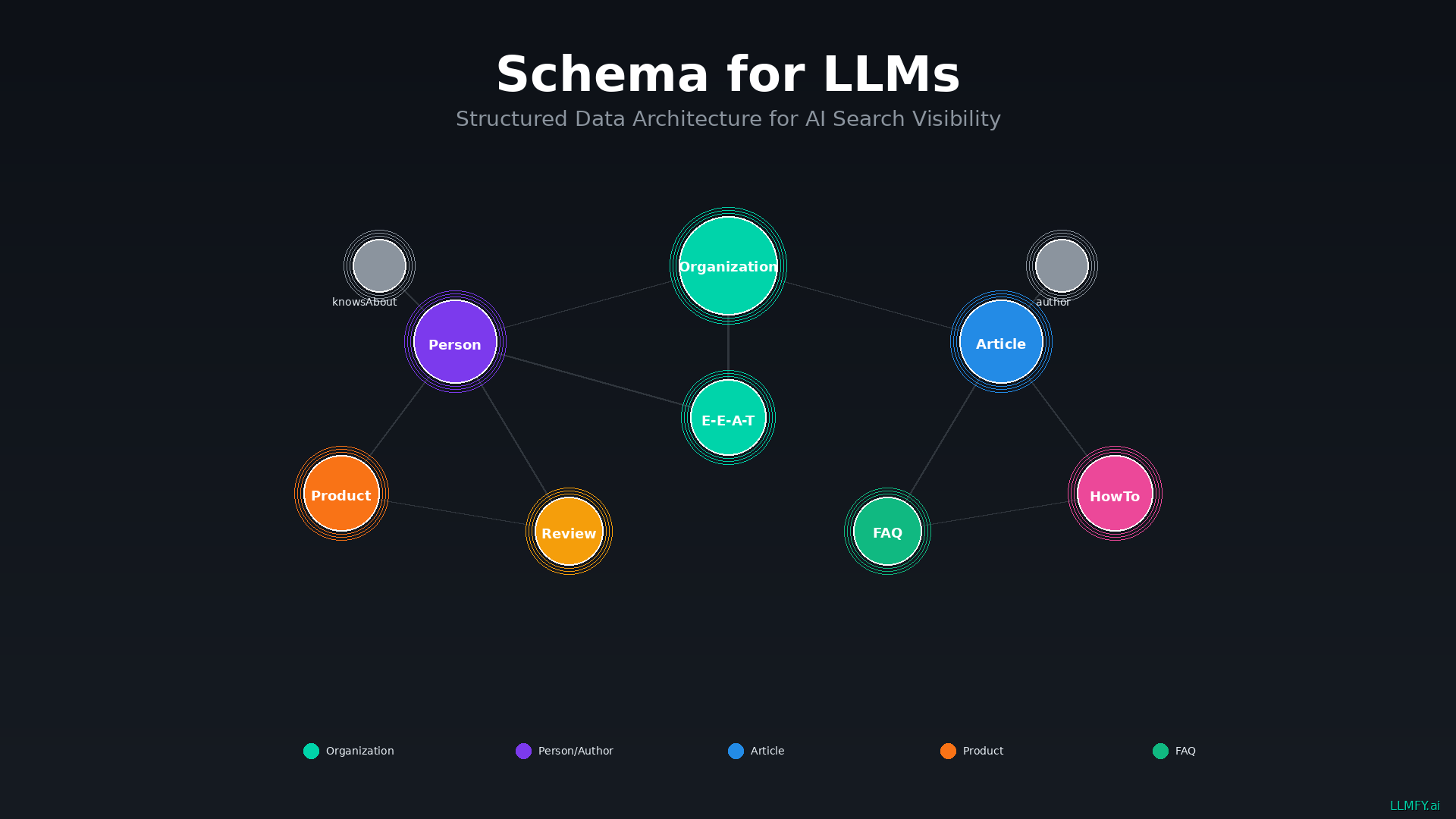

Leverage Schema Markup to Communicate E-E-A-T Signals

Structured data is your way of speaking directly to AI systems in a language they understand without ambiguity.

The most relevant schema types for E-E-A-T: Organization (business credentials, founding date, certifications), Person (author credentials, expertise areas, affiliations), Article (publication dates, authorship, publisher), and Review/Rating (social proof and user trust).
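Here is a rough sketch of an `Article` object that ties several of these types together: the author is a nested `Person`, the publisher is a nested `Organization` with its `foundingDate`, and publication and update dates are explicit. The function name and all values are hypothetical; the property names are standard schema.org vocabulary.

```python
import json
from datetime import date


def article_schema(headline: str, author_name: str, author_url: str,
                   publisher_name: str, founding_date: date,
                   published: date, modified: date) -> dict:
    """Build a schema.org Article with a nested Person (author) and Organization (publisher)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Authorship: point to the author page that carries the Person markup.
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        # Publisher credentials: an Organization with its founding date.
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "foundingDate": founding_date.isoformat(),
        },
        # Explicit publication and last-update dates in ISO 8601 format.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


# Hypothetical values for illustration only.
schema = article_schema(
    headline="How LLMs Evaluate E-E-A-T",
    author_name="Jane Example",
    author_url="https://example.com/authors/jane-example",
    publisher_name="Example Agency",
    founding_date=date(2010, 3, 1),
    published=date(2024, 1, 15),
    modified=date(2024, 6, 1),
)
print(json.dumps(schema, indent=2))
```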

I recommend implementing comprehensive schema across your entire site, not just on pages where you want rich snippets. For a detailed implementation guide, see our article Schema for LLM: The Complete Guide to Structured Data for AI Search Engines.

Earn Quality Backlinks and Mentions Aimed at AI Visibility

Backlinks and mentions from authoritative sources remain powerful E-E-A-T signals in the AI era. When a reputable website links to your content or mentions your brand as an authority, that external validation significantly influences how LLMs perceive your credibility.

But the nature of valuable backlinks is evolving. For LLM optimization, contextual relevance matters more than ever. A mention from a highly authoritative source within your specific niche carries more weight than dozens of links from generic directories, because LLMs can evaluate how topically aligned the linking site is with the site it links to.

Focus your efforts on earning recognition from sources that LLMs trust: established industry publications, educational institutions, government resources, recognized professional organizations. Those links amplify your E-E-A-T signals in ways that low-quality links simply can't match.

How to Measure and Monitor Your E-E-A-T Performance

Optimizing without measuring is like driving blindfolded. While E-E-A-T can't be reduced to a single number, several indicators give you a pretty clear picture.

Metrics That Work as E-E-A-T Proxies

Domain Authority and similar metrics, while imperfect, give you a sense of overall domain credibility. Branded search volume suggests your recognition and authority are growing. Backlink quality metrics reveal whether authoritative sources are validating your expertise.

At the content level, pay attention to time on page and engagement metrics — if people stick around and read, that's a sign your content is meeting expectations. Return visitor rates suggest they found enough value to come back. Social shares and natural mentions indicate that others consider your content worth amplifying.

My recommendation: run regular E-E-A-T audits examining your author credentials and their visibility, content thoroughness and accuracy, backlink profile with emphasis on authority and relevance, engagement patterns, and competitive positioning within your niche.

Track Your Visibility in AI Responses

Perhaps the most direct measure of whether your E-E-A-T is working is checking if you actually show up in AI-generated responses. At LLMFY we've built tools specifically for this: tracking brand and content visibility across major LLM platforms.

What I recommend is regularly testing queries related to your core topics across ChatGPT, Claude, Perplexity, and Google AI Overviews. Note whether your brand appears, whether citations link to your content, and how you stack up against competitors. That data gives you direct intelligence for adjusting your strategy.
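If you log those manual test results, a few lines of Python can turn them into per-platform rates you can compare from month to month. This is a sketch under stated assumptions: it does not query any AI platform (you record the answers and cited URLs yourself), brand detection is a simple case-insensitive substring match, and the `Observation` record, the function names, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Observation:
    platform: str         # e.g. "ChatGPT", "Perplexity"
    query: str            # the test query you ran
    answer_text: str      # the answer as you recorded it
    cited_urls: list[str]  # URLs the answer cited, if any


def cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def visibility_report(observations: list[Observation], brand: str, domain: str) -> dict:
    """Per platform: share of answers mentioning the brand and share citing the domain."""
    totals, mentions, citations = defaultdict(int), defaultdict(int), defaultdict(int)
    for obs in observations:
        totals[obs.platform] += 1
        if brand.lower() in obs.answer_text.lower():
            mentions[obs.platform] += 1
        if any(cites_domain(u, domain) for u in obs.cited_urls):
            citations[obs.platform] += 1
    return {
        p: {"mention_rate": mentions[p] / totals[p], "citation_rate": citations[p] / totals[p]}
        for p in totals
    }


# Hypothetical log of three manual tests.
log = [
    Observation("Perplexity", "what is e-e-a-t", "Example Agency explains...", ["https://example.com/eeat"]),
    Observation("Perplexity", "how to add person schema", "Use JSON-LD...", ["https://schema.org/Person"]),
    Observation("ChatGPT", "what is e-e-a-t", "E-E-A-T stands for...", []),
]
report = visibility_report(log, brand="Example Agency", domain="example.com")
print(report)
```

Matching on the parsed hostname rather than a raw substring avoids counting a lookalike domain such as `notexample.com` as a citation of `example.com`.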

Real Results: E-E-A-T Success Stories in AI Search

Theory is fine, but results speak louder.

In our work with clients across various industries, we've seen consistent patterns linking E-E-A-T improvements to increased AI search visibility. The results are especially striking in competitive YMYL niches.

A financial services client implemented a comprehensive E-E-A-T optimization program: detailed author bios with verified credentials, thorough schema markup, and a content cluster strategy covering personal finance topics. Within four months, their content started appearing in 65% more AI-generated financial advice responses compared to baseline. And more significantly, they saw a 40% increase in direct citations from AI systems — visibility that traditional SEO metrics wouldn't even capture.

A healthcare content publisher saw similar results after strengthening E-E-A-T signals through medical professional authorship attribution, documented peer review processes in content methodology sections, and authoritative medical source citations. Their visibility in health-related AI responses improved by 78%, with particularly strong performance on queries where accuracy and trust are absolutely paramount.

The takeaway from these cases is clear: investing in E-E-A-T translates directly to LLM visibility. Better author identification, more rigorous content, stronger external validation — it all pays dividends across both traditional and AI search.

Conclusion: E-E-A-T Is No Longer Optional

Look, I can sum it up in one sentence: in a world where AI chooses who to cite, E-E-A-T is your calling card.

Experience, Expertise, Authoritativeness, and Trustworthiness are no longer abstract quality concepts. They're the practical factors that determine whether your content gets selected, cited, and amplified by language models. And the professionals and businesses that invest in optimizing these signals today will have an enormous competitive edge as AI search keeps growing.

At LLMFY, our E-E-A-T Analyzer tool evaluates your website's Experience, Expertise, Authoritativeness, and Trustworthiness signals as perceived by LLMs. It examines your author entities, content depth, backlink authority, schema implementation, and brand mentions to give you an actionable E-E-A-T score.

→ Start your free E-E-A-T Analysis and discover exactly how AI search engines perceive your content's credibility.


Jesus Lopez SEO

LLMO Expert & Founder of LLMFY

SEO expert with over 18 years of experience. Pioneer in LLMO (Large Language Model Optimization) and founder of Posicionamiento Web Systems. Helping companies optimize their presence in traditional search engines and AI search engines.