Semantic SEO is what decides whether your content exists for Google, ChatGPT, and Perplexity, or whether you're invisible. That simple. And no, I'm not talking about cramming keywords like it's still 2012. I mean something far more serious: making your content actually understand the meaning behind every search and answer it the right way.

I've been doing SEO since 2007. I've watched Google go from a glorified directory to a system that reads language almost like a person, thanks to Hummingbird, RankBrain, and BERT. Now LLMs have jumped into the mix, and we're rewriting the playbook again.

What still gets me is seeing clients walk in optimizing like it's 2015. And some agencies too, sadly.

In this guide you'll learn:

- What semantic SEO actually is and why it's the foundation of modern visibility

- How search engines and LLMs process your content at a semantic level

- 7 proven strategies to master semantic SEO in your niche

- The mistakes silently killing your visibility

- How to measure and improve your semantic score step by step

What Is Semantic SEO and Why Should You Care?

Semantic SEO means aligning your content with the meaning and real intent behind a search, instead of obsessing over the exact-match keyword.

A real example I bring up in client meetings all the time. Someone searches "best laptop for college students." They don't want a page that repeats those words 47 times. They want to know if it fits a tight budget, if it's light enough to lug around campus every day, if the battery survives a morning at the library without an outlet. That's semantic SEO: your content actually understanding the query, not memorizing the words.

Google famously calls it "things, not strings." My version is blunter: stop thinking about isolated keywords and start thinking in semantic fields and SEO entities.

The Evolution of Semantic SEO: From Keywords to Meaning

Google's been marching toward natural language understanding for years. Each step has reinforced why semantics matter:

- 2013, Hummingbird: The algorithm that finally let Google understand complex, conversational queries, not just individual words strung together. A genuine turning point.

- 2015, RankBrain: Brought machine learning into the mix to interpret searches Google had literally never seen before. Imagine making sense of questions nobody has ever asked. That's what RankBrain was built for.

- 2019, BERT: Changed how Google understands context inside a sentence. Prepositions, negations, subtle nuances. Suddenly all of that mattered.

These advances in NLP (natural language processing) rewrote the playbook. Google no longer looks for text matches. It looks for meaning matches. That's where semantic SEO becomes non-negotiable.

Why Semantic SEO Matters More Than Ever

ChatGPT, Perplexity, Claude. These systems don't work like regular Google. They don't do keyword matching. They interpret what you actually want to know, then hunt for content that genuinely answers that. Semantic SEO taken to its logical extreme.

When someone asks Perplexity "how do I improve my website's E-E-A-T," the system isn't searching for pages with that exact phrase. It's looking for content that demonstrates real expertise, gives advice you could implement tomorrow morning, and cites sources that aren't fluff. That's content that's semantically relevant in a deep, substantive way.

The data backs this up:

- 85% of businesses are already investing in AI-powered SEO

- Top-3 pages use 53% more semantically related terms

- Over 75% of searches are now influenced by semantic technology

Either you adapt or you disappear from the results that matter.

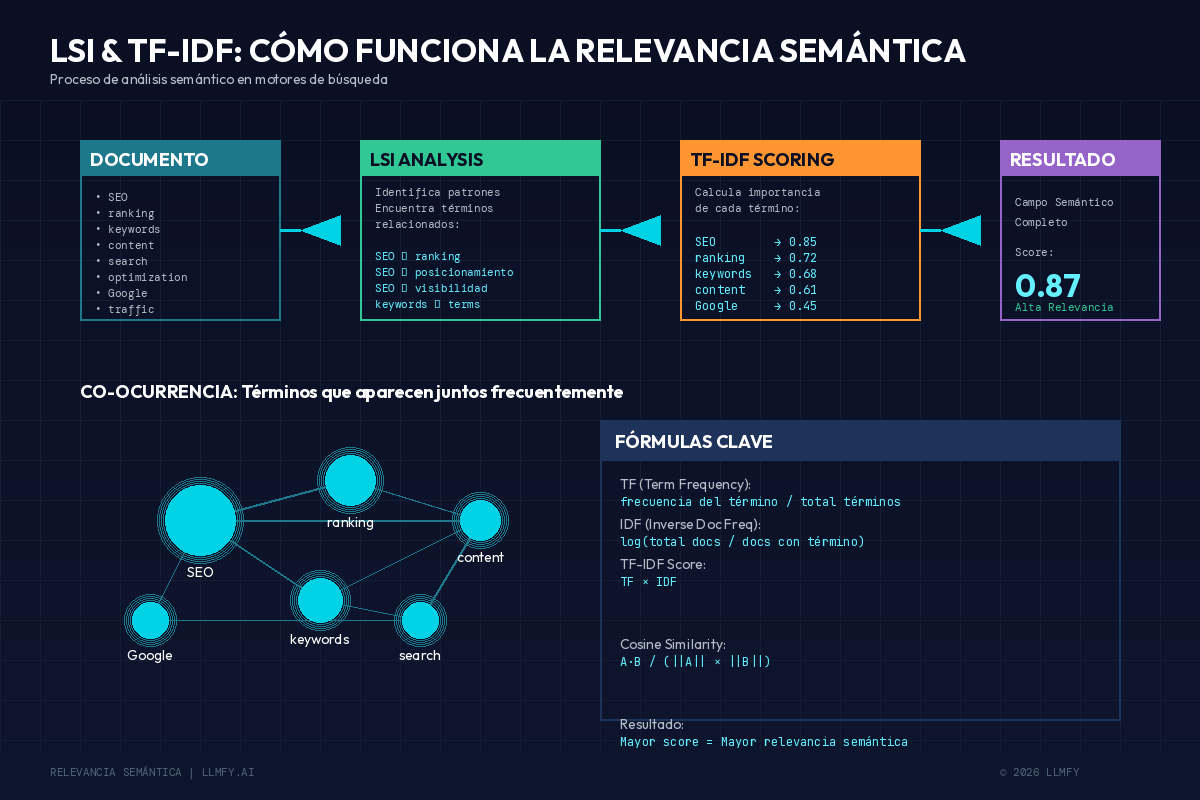

How LSI (Latent Semantic Indexing) and TF-IDF Work

Google has been using semantic concepts for years, and most SEOs still don't grasp them. Worth getting clear before we get into LLMs.

LSI (Latent Semantic Indexing)

Latent Semantic Indexing is a technique that identifies relationship patterns between terms and concepts by looking at which words tend to appear in the same documents. Google has not confirmed using LSI itself, but modern systems capture the same idea: an article about electric cars is expected to mention terms like battery, range, charging, and Tesla.

If your content on EVs doesn't mention these terms, Google doubts its depth. Fair call. An EV article without the word "range" is like a Valencian paella recipe that forgets the rice.

TF-IDF (Term Frequency-Inverse Document Frequency)

TF-IDF measures a word's importance to a document relative to a collection of documents. Google uses variations of this formula to figure out which terms are common in content about a topic, which are distinctive, and whether your content covers the semantic field or comes up short.

Many SEOs don't even know it exists, and it's been deciding their content's depth for years.
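To make the idea concrete, here is a minimal sketch of the textbook TF-IDF formula on a made-up three-document corpus. It is not Google's implementation, which is not public. The function names and sample documents are illustrative assumptions.

```python
import math
from collections import Counter

def tokenize(text):
    """Lowercase and split on whitespace (real pipelines also strip punctuation)."""
    return text.lower().split()

def tf_idf(docs):
    """Return one {term: score} dict per document.

    tf  = term count / number of terms in the document
    idf = log(total documents / documents containing the term)
    """
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))  # count each term once per document
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores

# Hypothetical mini-corpus, for illustration only.
docs = [
    "electric cars need battery range and charging",
    "electric cars are quiet",
    "paella needs rice",
]
scores = tf_idf(docs)
# "battery" appears in one document, "electric" in two,
# so "battery" is the more distinctive term for the first document.
print(scores[0]["battery"] > scores[0]["electric"])
```

A term that shows up in every document gets an IDF of zero, which is the formula's way of saying it tells you nothing about what makes one document different from another.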

Co-occurrence and Semantic Relationships

Co-occurrence is the number of times two or more words appear together in the same context. Google has been using this for years to map semantic relationships between concepts.

For example, if "SEO" and "ranking" frequently appear together across millions of documents, Google understands they're intimately related concepts. Your content needs to reflect these natural relationships if you want semantic SEO working in your favor.
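A rough sketch of how co-occurrence can be counted, assuming "same context" means "same document" (real systems may use sentences or sliding windows instead). The corpus and function name are made up for illustration.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_counts(docs):
    """Count how many documents contain each unordered pair of distinct terms."""
    pair_counts = Counter()
    for doc in docs:
        # Sort so each pair is always stored in the same (alphabetical) order.
        terms = sorted(set(doc.lower().split()))
        pair_counts.update(combinations(terms, 2))
    return pair_counts

# Hypothetical corpus, for illustration only.
docs = [
    "seo ranking depends on content",
    "ranking factors in seo",
    "paella recipe with rice",
]
counts = cooccurrence_counts(docs)
print(counts[("ranking", "seo")])  # pair seen in two documents
print(counts[("rice", "seo")])     # pair never seen together
```

Across millions of documents, pairs with high counts are the ones a system can treat as closely related.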

How Google combines LSI (relationship patterns between terms) and TF-IDF (relative weight of each word) to evaluate whether your content covers the full semantic field of a topic.

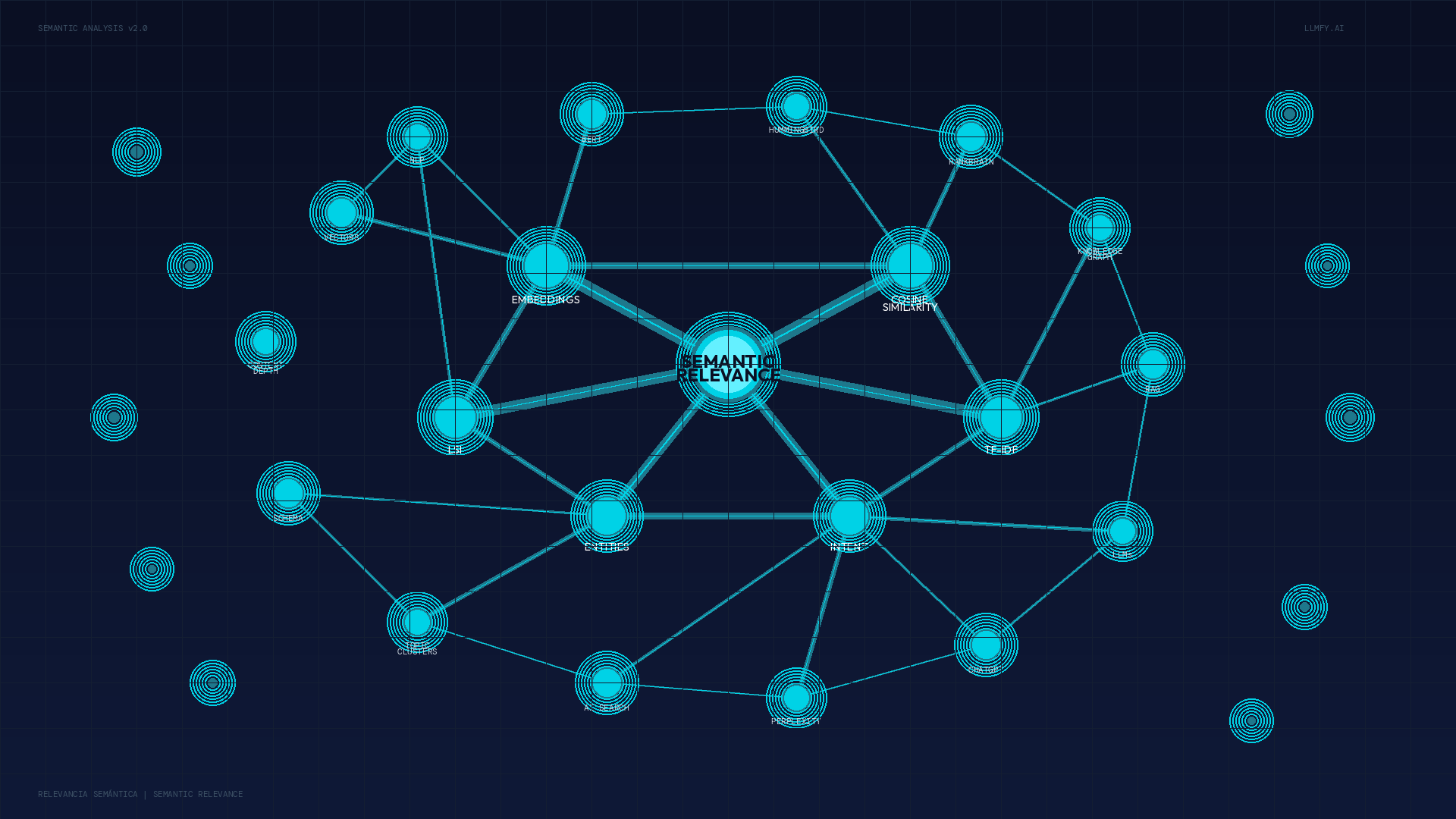

How Search Engines (and LLMs) Process Your Content

Conceptual map of semantic SEO components: embeddings, cosine similarity, LSI, TF-IDF and SEO entities connected in a meaning network.

Let me simplify it. Three layers:

First layer, entity recognition. When you write "Apple" in your content, the system has to figure out whether you mean the Cupertino company, the fruit, or the Beatles' record label. It does this by analyzing context and the SEO entities surrounding the term. A paragraph mentioning "iPhone," "Tim Cook," and "Silicon Valley" alongside "Apple" makes things clear.

Second layer, relationship mapping. Google's Knowledge Graph contains over 500 billion connected facts. Your content gains semantic strength when it accurately reflects these real-world relationships. Picture a giant map of who goes with whom. Your content has to fit inside.

Third layer, intent matching. The system tries to guess what's behind every search using semantic signals. Want to buy? Want to learn? Comparing before deciding?

If your content satisfies the three layers, you've won. Miss any of them and it doesn't matter how often you repeat the main keyword.
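The first layer can be illustrated with a toy disambiguator that picks the sense of "Apple" whose typical context words overlap most with the paragraph. This is a deliberately naive sketch. The vocabularies in `SENSES` are hand-picked assumptions, and production systems rely on trained models and a knowledge graph rather than word lists.

```python
# Hypothetical context vocabularies for three senses of "Apple".
SENSES = {
    "Apple Inc.": {"iphone", "tim", "cook", "silicon", "valley", "mac"},
    "apple (fruit)": {"orchard", "pie", "juice", "tree", "harvest"},
    "Apple Records": {"beatles", "album", "label", "lennon", "mccartney"},
}

def disambiguate(text, senses=SENSES):
    """Return the sense whose context vocabulary overlaps most with the text."""
    words = {w.strip('.,"!?').lower() for w in text.split()}
    overlaps = {sense: len(words & vocab) for sense, vocab in senses.items()}
    return max(overlaps, key=overlaps.get)

paragraph = "Apple unveiled a new iPhone, and Tim Cook spoke from Silicon Valley."
print(disambiguate(paragraph))
```

The takeaway for writers is the same as in the text: the surrounding entities are what resolve the ambiguity, so name them.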

Query Fan-Out: Why a Single Search Triggers Twenty

One of the most overlooked concepts in modern semantic SEO is query fan-out. And it's where Google AI Mode and Gemini are pulling ahead.

The idea is simple. When someone asks Google AI Mode "how do I build a semantic cluster for my blog?", the system doesn't run one search. It runs many in parallel:

- what is a topical cluster?

- examples of topical clusters in SEO

- tools to map LSI keywords

- pillar page structure

- internal linking between cluster and pillar

- common mistakes in topical clusters

This is called query fan-out: a single user query opens up into multiple subqueries that get resolved in parallel. The LLM then synthesizes a response by combining content from those different searches.

What does this mean for your semantic SEO? Your content needs to be able to surface in any of those subqueries, not just the main one. If you only cover "what is a topical cluster" but never mention pillar pages, internal linking, common mistakes, or LSI tools, you're out of 80% of the fan-out. And therefore out of the synthesized response.
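A quick way to reason about this is to check an article against the fan-out list. The sketch below assumes you have written down the subqueries yourself and picked a few key terms for each. The matching is crude substring search, so treat the result as a rough gap list, not a score any search engine computes.

```python
def fanout_coverage(subquery_terms, article_text):
    """Return (covered subqueries, share of subqueries covered).

    A subquery counts as covered when all of its key terms appear in the article.
    """
    text = article_text.lower()
    covered = [
        subquery
        for subquery, terms in subquery_terms.items()
        if all(term in text for term in terms)
    ]
    return covered, len(covered) / len(subquery_terms)

# Key terms chosen by hand for the six subqueries listed above (an assumption).
subqueries = {
    "what is a topical cluster?": ["topical cluster"],
    "examples of topical clusters in SEO": ["topical cluster", "example"],
    "tools to map LSI keywords": ["lsi", "tool"],
    "pillar page structure": ["pillar page"],
    "internal linking between cluster and pillar": ["internal link"],
    "common mistakes in topical clusters": ["mistake"],
}
article = "A topical cluster groups related articles around one central theme."
covered, share = fanout_coverage(subqueries, article)
print(covered, round(share, 2))
```

An article that only defines the term covers one subquery out of six here, which is the situation described above.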

How to Optimize for Query Fan-Out

Three things that work, tested with clients:

- Cover adjacent questions in the same article. If you write about "topical clusters," include a brief section on how LSI keywords are mapped, what tools to use, and the classic mistakes. Each section becomes a landing point for a subquery.

- Connect topical clusters across articles. If your pillar page doesn't link to 5-10 articles deepening subtopics, the query fan-out doesn't find the additional context and prefers a site that does have it.

- Contextualize your keywords. Repeating "semantic SEO" 40 times isn't enough. You have to show search context: what it's for, how to measure it, how it differs from the SEO of 10 years ago, which tools support it. The richer the context, the more fan-out subqueries land on your page.

Search context is to 2026 what keyword density was to 2010. It matters, but it matters in a totally different way than what many SEOs are still measuring.

Semantic SEO for Google AI Mode, Gemini, and Voice Search

A question I get repeatedly in consulting: "Do I need to optimize differently for Google AI Mode versus Gemini or ChatGPT?" Short answer: the foundations of semantic SEO are the same. The priorities change.

Google AI Mode

Google AI Mode (the evolution of AI Overviews) uses aggressive query fan-out and prioritizes domains with topical authority demonstrated through cluster structure. If your site has a pillar page with 10 satellite articles linked, AI Mode reads it as an ecosystem and increases your citation probability. Orphan pages, however good, have a much harder time here.

Gemini

Gemini, embedded in the Google ecosystem, prioritizes freshness and verifiable E-E-A-T signals. It penalizes content that doesn't get updated and favors authors with Knowledge Graph presence. For Gemini, keeping your articles alive (with visible changelogs, update dates, and new sections) carries more weight than in other LLMs.

Voice Search and AI Search

Voice search and AI search are close cousins in intent: both use natural language, often start with interrogatives ("how," "why," "when"), and expect direct answers in under 30 words. The difference is the output format: voice is text-to-audio, AI is text-to-text.

To cover both:

- Put the answer in the first sentence after the H3.

- Use the exact question as the heading (don't paraphrase it).

- Avoid long lists in conversational answers: voice doesn't read them well.

Every well-structured FAQ covers AI Mode, Gemini, voice, and AI search at once. One structure, four platforms.
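The first two rules are easy to check automatically. Here is a minimal sketch, assuming each FAQ entry is a heading plus an answer string. The 30-word budget comes from the text above, the function names are made up, and the sentence splitter is naive (a decimal such as "2.5" would cut the sentence early).

```python
def first_sentence(answer):
    """Return the text up to and including the first sentence-ending mark."""
    for i, char in enumerate(answer):
        if char in ".!?":
            return answer[: i + 1].strip()
    return answer.strip()

def is_voice_ready(question_heading, answer, max_words=30):
    """True when the heading is a question and the opening sentence fits the word budget."""
    opening = first_sentence(answer)
    return question_heading.strip().endswith("?") and len(opening.split()) <= max_words

answer = (
    "Semantic SEO is an optimization approach that aligns your content with the "
    "real meaning and intent behind a search, rather than targeting individual "
    "keywords. It covers the complete semantic field of a topic."
)
print(is_voice_ready("What is semantic SEO?", answer))
```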

The RAG Process: How LLMs Evaluate Your Semantic SEO

RAG stands for Retrieval-Augmented Generation. The LLM first searches for semantically relevant content and then generates its response based on what it found. It doesn't make things up (or shouldn't); it builds answers from sources.

The process, step by step:

- Your content gets converted into a numerical vector (an embedding). Think of it as coordinates that place your text in a meaning space.

- When someone asks a question, that question also becomes a vector.

- The system compares both vectors using cosine similarity. If they point in similar directions, your content has high semantic relevance for that query.

- The LLM uses the semantically closest content to build its answer.
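The steps above can be sketched in a few lines. One loud assumption: `embed` here is a toy bag-of-words counter standing in for a real embedding model, so it only captures shared words, not meaning. The passages and function names are invented for illustration.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding: a bag-of-words count vector.
    Real systems use dense vectors produced by a trained model."""
    return Counter(text.lower().split())

def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query, passages, top_k=1):
    """Rank passages by similarity to the query and keep the closest ones."""
    query_vec = embed(query)
    ranked = sorted(
        passages,
        key=lambda p: cosine_similarity(query_vec, embed(p)),
        reverse=True,
    )
    return ranked[:top_k]

# Hypothetical content store, for illustration only.
passages = [
    "topic clusters link a pillar page to related articles",
    "paella needs rice and saffron",
    "schema markup describes entities to search engines",
]
best = retrieve("how do topic clusters and a pillar page work", passages)
print(best[0])
```

Whatever `retrieve` returns is what the model gets to read before answering, which is why content that never makes the cut cannot be cited.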

If your content is shallow, its vector lands far from the questions people actually ask. A distant vector means low semantic relevance, which means you don't get retrieved, which means you don't exist for AI.

That simple. That harsh.

Embeddings and Cosine Similarity: The Science Behind Semantic SEO

I know this sounds like math class. Stick with me. It's worth it.

The RAG (Retrieval-Augmented Generation) flow: the LLM converts the query and the content into vectors, compares them by cosine similarity, and uses the closest ones to build the response.

An embedding is a numerical representation of what your text means. Words with similar meanings produce similar vectors. "Happy" and "joyful" point in almost the same direction. "Happy" and "photon" point at entirely different galaxies.

Cosine similarity is the cosine of the angle between these vectors, so it depends on their direction, not their length:

- 1.0 = perfect semantic match

- 0.0 = no semantic relationship

- -1.0 = opposite meanings
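These three reference values follow from the standard definition of cosine similarity. The vectors in the worked check below are tiny made-up examples (real embeddings have hundreds or thousands of dimensions):

$$
\cos\theta = \frac{\mathbf{a}\cdot\mathbf{b}}{\lVert\mathbf{a}\rVert\,\lVert\mathbf{b}\rVert}
$$

$$
\begin{aligned}
\mathbf{a}=(1,2),\ \mathbf{b}=(2,4): &\quad \frac{1\cdot 2 + 2\cdot 4}{\sqrt{5}\,\sqrt{20}} = \frac{10}{10} = 1 \\
\mathbf{a}=(1,0),\ \mathbf{b}=(0,1): &\quad \frac{0}{1\cdot 1} = 0 \\
\mathbf{a}=(1,2),\ \mathbf{b}=(-1,-2): &\quad \frac{-1-4}{\sqrt{5}\,\sqrt{5}} = \frac{-5}{5} = -1
\end{aligned}
$$

Note that in the first case one vector is twice as long as the other and the score is still 1: only direction counts.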

I'm telling you this because content with strong semantic SEO produces more robust embeddings. Robust embeddings mean more chances of getting retrieved and more visibility in AI.

Poor content = weak embedding = no semantic SEO = invisible to AI.

That's why we built a tool at LLMFY that measures exactly this: your semantic relevance score against your real competitors. Try it free at llmfy.ai/dashboard and see where you're falling short.

7 Strategies to Master Semantic SEO (Tested on Real Projects)

Seven things I've been applying with my agency clients for years. They move the needle, and I have the data to back that up.

1. Build Topic Clusters, Not Isolated Pages

A pillar page about "Technical SEO" should link to content about page speed, Core Web Vitals, sitemaps, robots.txt, and JavaScript rendering. All interconnected like a web.

Each piece in the cluster reinforces the others. Internal links build semantic bridges that algorithms recognize and reward. Picture a well-planned neighborhood: every house gains value when the area is taken care of.
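A cluster is easy to audit once you have a map of internal links. The sketch below assumes you have exported that map as a dictionary from URL to the set of URLs it links to. The URLs mirror the Technical SEO example above and are hypothetical.

```python
def audit_cluster(pillar, links):
    """links maps each URL to the set of URLs it links to.

    Returns (cluster pages that do not link back to the pillar,
             pages that receive no internal links at all).
    """
    cluster_pages = links.get(pillar, set())
    missing_backlink = sorted(
        page for page in cluster_pages if pillar not in links.get(page, set())
    )
    linked_to = set().union(*links.values())
    orphans = sorted(page for page in links if page not in linked_to)
    return missing_backlink, orphans

# Hypothetical internal link map, for illustration only.
links = {
    "/technical-seo": {"/page-speed", "/core-web-vitals", "/sitemaps"},
    "/page-speed": {"/technical-seo", "/core-web-vitals"},
    "/core-web-vitals": {"/technical-seo"},
    "/sitemaps": set(),
    "/javascript-rendering": {"/technical-seo"},
}
missing_backlink, orphans = audit_cluster("/technical-seo", links)
print(missing_backlink)  # cluster pages that never point back to the pillar
print(orphans)           # pages nothing links to
```

In this made-up site, the sitemaps article is a dead end and the JavaScript rendering article is invisible from the pillar, so both weaken the cluster.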

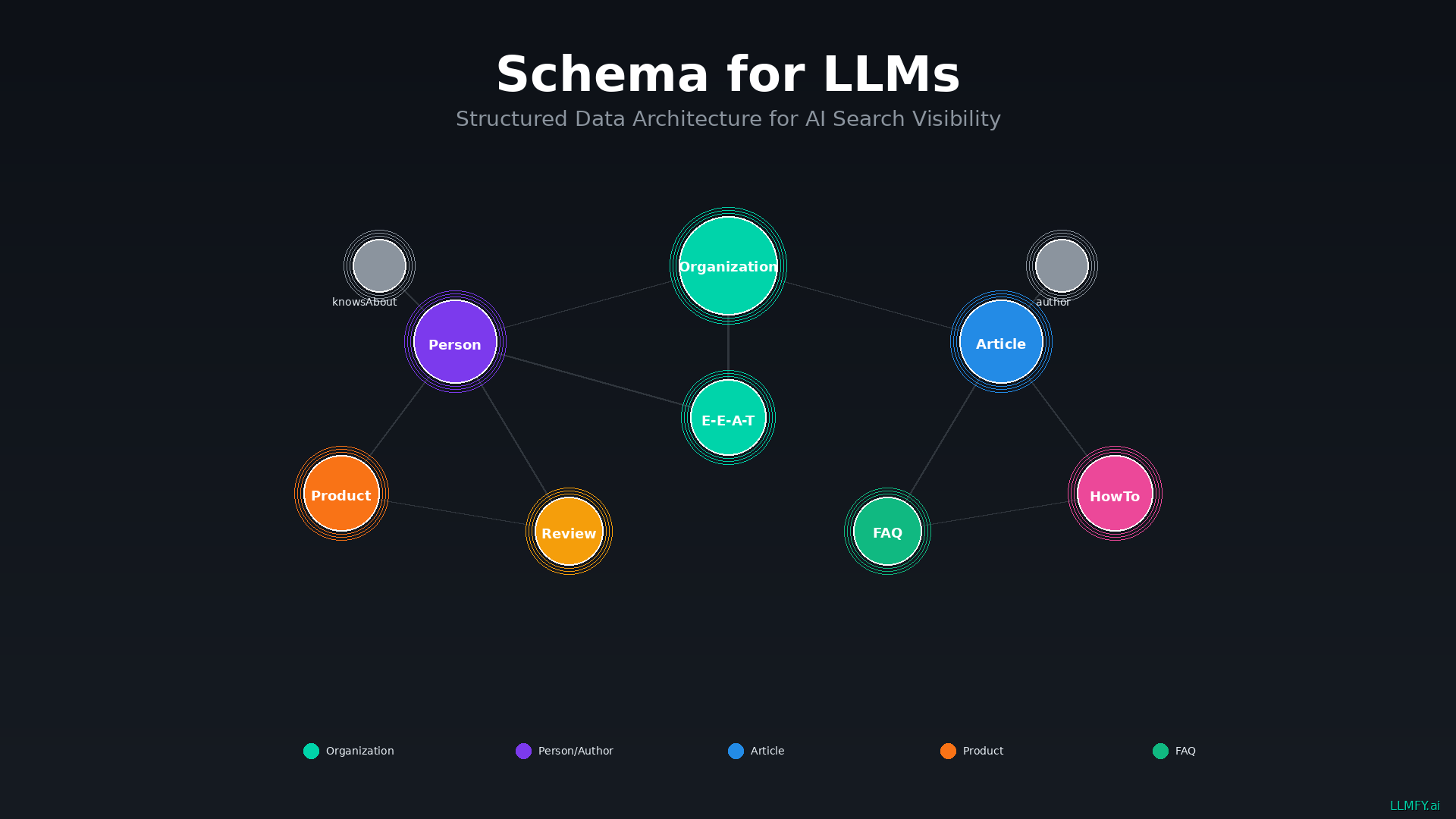

2. Implement Entity-Focused Schema Markup

Structured data is your way of speaking directly to search engines in their own language. Article, HowTo, FAQPage, Product, Organization, and so on.

Schema helps Google and LLMs understand the SEO entities in your content and the relationships between them. A basic piece of semantic SEO that too many people still ignore.
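As an illustration, here is a small sketch that builds FAQPage markup from question and answer pairs using the schema.org vocabulary. The helper name `faq_jsonld` is made up. The output is meant to go inside a `<script type="application/ld+json">` tag on the page.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

markup = faq_jsonld([
    (
        "What is semantic SEO?",
        "Semantic SEO is an optimization approach that aligns your content "
        "with the real meaning and intent behind a search.",
    ),
])
print(json.dumps(markup, indent=2))
```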

If you want to dig deeper, we have a specific guide: Schema for LLM.

3. Cover the Complete Semantic Field

Shallow content doesn't compete. Period.

Before writing anything, ask yourself three questions: what LSI terms should I include? What related entities? What does someone researching this topic actually need to know?

Remember the stat: top-3 pages use 53% more semantically related terms than those below them.

4. Use Precise, Consistent Terminology

Bad example: "Make your site faster. Speed matters. Fast pages rank better."

Good example: "LCP should be under 2.5 seconds and INP under 200ms. These Core Web Vitals metrics directly impact ranking."

The difference is huge. Terminological precision creates more robust embeddings. LLMs understand specific content far better than vague, hand-wavy content.

5. Include Original Data and Expert Opinions

LLMs prioritize authoritative content with real depth. If you're just summarizing what already exists on the internet, why would they cite you instead of the original source?

Original research, real client case studies, interviews with people on the ground, data your competition can't replicate tomorrow. That's what sets you apart. Be a primary source, not an echo of what everyone else is saying.

6. Structure Your Content for Semantic Extraction

Clear heading hierarchy, short paragraphs, descriptive subheadings. Well-structured content makes it much easier for algorithms to extract the semantic relationships in your text.

I've seen pages with excellent information that LLMs ignore because they're written as one wall of text. Structure is a technical requirement.

7. Connect to External Knowledge Bases

Reference Wikipedia where relevant, use Wikidata Q-IDs, link to recognized sources in your industry. The "sameAs" property in schema connects your entities to Google's Knowledge Graph.

Don't be afraid to link outward. It's a trust signal, not an authority leak. The "don't bleed link juice" idea died a long time ago.
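A minimal sketch of what "sameAs" looks like in practice, using Organization markup. Every value below is a placeholder: the company name, the URLs, and especially the Wikidata ID are hypothetical and must be replaced with the real identifiers for your entity.

```python
import json

def organization_jsonld(name, url, same_as):
    """Build schema.org Organization markup with sameAs links to external profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }

markup = organization_jsonld(
    name="Example Co",                                # placeholder name
    url="https://example.com",                        # placeholder site
    same_as=[
        "https://en.wikipedia.org/wiki/Example_Co",   # placeholder article
        "https://www.wikidata.org/wiki/Q0000000",     # placeholder Q-ID, not a real entity
    ],
)
print(json.dumps(markup, indent=2))
```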

What Still Works from Traditional SEO

Traditional SEO isn't dead. Technical SEO is still the foundation. Backlinks still count. UX matters more than ever.

What's shifted is the center of gravity:

| Before | Now |
|---|---|
| One keyword per page | Complete semantic coverage of the topic |
| Keyword density | Semantic field and natural language |
| Links for PageRank | Links for semantic authority |
| Meta keywords | Schema markup and entities |
| Content length | Semantic relevance and completeness |

And what you need to add: LLM optimization, citable content, simultaneous visibility on ChatGPT, Perplexity, and Google. You don't pick one or the other. You do both. Semantic SEO is the bridge between the Google world and the AI world.

How to Measure Your Semantic SEO Impact

What doesn't get measured doesn't get improved. Almost two decades later, still true.

Traditional indicators: rankings by topic (not individual keywords), organic traffic, featured snippets, Knowledge Panel appearances.

AI-specific indicators: how often LLMs cite you, AI Overview appearances, brand mentions in AI responses, semantic similarity scores against competitors.

LLMFY gives you exactly that. Try it free at llmfy.ai/dashboard.

Public Data: How AI Citability Is Evolving

What serious studies have surfaced over the past year:

| Finding | Data | Source |
|---|---|---|
| Google AI Overviews growth | +58% YoY; trigger on 48% of tracked queries (Feb 2025–Feb 2026) | BrightEdge AI Catalyst |
| Overlap between AIO citations and organic top 10 | Only 17% (5 out of 6 citations come from outside the top 10) | BrightEdge, 16-month tracking |
| Benefit of being cited in AIO | +35% organic clicks and +91% paid clicks vs non-cited pages | Search Engine Journal / BrightEdge |
| Origin of sources AI cites | Only 5–10% of citations come from the brand's own website | McKinsey AI Discovery Survey, Aug 2025 |
| Citation probability with entity coverage | Pages with 15+ connected entities: 4.8× higher selection probability | Wellows, 2026 |

My reading of this data, after applying it with real clients: ranking top 3 no longer guarantees AI cites you. Citation is earned through semantic coverage, structured data, and topical authority, not classic position. That's why we built LLMFY with a focus on platform-specific citability metrics, not just generic semantic relevance.

The 5 Mistakes Destroying Your Semantic SEO

Same patterns, year after year, client after client. See if any sound familiar.

Keyword stuffing. Repeating the same word 50 times doesn't improve your semantic SEO. It makes you look like spam and confuses algorithms about your actual semantic field. Like shouting your name at a party. It makes you annoying, not interesting.

Content that's too thin. 500 words on a complex topic doesn't have the depth to compete with comprehensive guides. I'm not saying every article needs to be an encyclopedia entry, but if the subject demands depth, give it room.

Ignoring LSI terms. If you write about email marketing and never mention deliverability, segmentation, or automation, you're telling Google that your understanding of the topic is surface-level. And Google would be right.

Inconsistent terminology. Using three different terms for the same concept confuses semantic signals. Pick one way to refer to each concept and stick with it through the whole piece. Consistency is an underrated virtue.

No structure. Walls of text with no headings, no differentiated paragraphs, no visual organization. Practically impossible for an LLM to parse. Like handing in an exam without separating your answers.

Frequently Asked Questions About Semantic SEO

What is semantic SEO?

Semantic SEO is an optimization approach that aligns your content with the real meaning and intent behind a search, rather than targeting individual keywords. It covers the complete semantic field of a topic, works on entity relationships, and satisfies what the user genuinely wants to know.

How do LLMs evaluate the semantic relevance of my content?

LLMs convert your content into numerical vectors called embeddings and compare them against the user's query using cosine similarity. Content with higher semantic relevance (scores closer to 1.0) gets retrieved and used to construct responses.

Does semantic SEO replace traditional SEO?

No. Semantic SEO complements and amplifies traditional SEO. The technical fundamentals still matter: speed, indexability, quality backlinks. What changes is that the focus expands from individual keywords to comprehensive semantic coverage.

How does semantic SEO affect my visibility on ChatGPT?

A lot. ChatGPT, Perplexity, and similar systems use semantic understanding to decide which content to retrieve. If your content has high semantic relevance, its embeddings are stronger, and you're significantly more likely to be cited when a user asks a related question.

What's the relationship between LSI, TF-IDF, and semantic SEO?

LSI and TF-IDF are techniques search engines have been using for years to evaluate content's semantic relevance. LSI identifies terms that relate to each other; TF-IDF measures their relative importance within a collection of documents. Both determine whether your content semantically covers a topic in full.

How long until a semantic SEO strategy shows results?

Between 3 and 6 months for significant improvements, similar to traditional SEO. Building topical authority is long-term work that requires patience and consistency. There are no shortcuts, but the results tend to be far more durable.

What is an embedding and why does it matter for semantic SEO?

An embedding is the numerical representation of a text's meaning in vector form. It matters for semantic SEO because LLMs compare embeddings (not words) when deciding which content to cite. The richer and more specific your text, the more distinctive its embedding, and the more likely it is to be retrieved.

Can a short article rank well with semantic SEO?

Depends on the topic. For simple, very specific queries, a well-structured 800-word article can compete. For complex topics like semantic SEO, LLMO, or Core Web Vitals, you'll need somewhere between 1,500 and 3,000 words to credibly cover the semantic field.

Key Takeaways on Semantic SEO

After nearly two decades in SEO, here's what I'm dead sure about:

- Semantic SEO measures meaning, not word matching. That changes everything.

- Google has been evaluating semantic relevance with LSI, TF-IDF, Hummingbird, RankBrain, and BERT for years.

- LLMs use embeddings and cosine similarity to decide which content has real depth.

- Topic clusters build more authority than standalone pages.

- Covering the complete semantic field (LSI terms, entities, relationships) isn't optional anymore.

- Without data you're flying blind. Measure your performance.

- Traditional SEO and semantic SEO are complementary, not mutually exclusive.

The future of search is semantic. Honestly, it's not the future, it's already here.

Want to Measure Your Semantic SEO?

Stop guessing. Start measuring.

Analyze any URL free at llmfy.ai/dashboard. In under 5 minutes you've got your semantic relevance score, your content gaps, and concrete recommendations to fix it.

More than 2,000 professionals are already working on this. Your call.

Sources and References

This article is based on research from authoritative sources on semantic SEO and semantic relevance:

- Google Search Central - A Guide to Google Search Ranking Systems - Official documentation on BERT, RankBrain, and Neural Matching.

- Backlinko - Semantic SEO: What It Is and Why It Matters - Study of 11 million search results showing how "topically relevant" content impacts rankings.

- Search Engine Land - Semantic SEO: How to optimize for meaning over keywords - Complete guide on semantic optimization for Google and AI engines.

- Semrush - Semantic Search: What It Is and Why It Matters - Explanation of Google's Knowledge Graph with over 500 billion facts about 5 billion entities.

- Lumar - Semantic Search Explained: Vector Models' Impact on SEO - Google research paper on "Leveraging Semantic and Lexical Matching."

- Search Engine Journal - 7 Ways To Use Semantic SEO For Higher Rankings - Study of 2.5 million queries on "People Also Ask" feature.

- Holistic SEO - Importance of Lexical Semantics and Semantic Similarity - SEO case study with 30 websites on lexical semantics.

- BrightEdge Research - Study cited in Search Engine Land showing 82.5% of AI Overview citations point to pages with semantic depth.

- SEO by the Sea - Semantic Relevance of Keywords - Analysis of Google patent US 11,106,712 on semantic relevance of keywords.

Jesus Lopez

LLMO Expert & Founder of LLMFY

SEO expert with over 18 years of experience. Pioneer in LLMO (Large Language Model Optimization) and founder of Posicionamiento Web Systems. Helping companies optimize their presence in traditional search engines and AI search engines.